GSP1346

Overview

Working with big datasets often involves transforming data into assets tailored to the needs of a specific project, and tracking all of the data transformations across multiple tools in your system can be tricky. Data lineage in Knowledge Catalog is a solution that provides a mechanism to:

- Capture data journeys: Track and review how data is sourced, transformed, and moved through your systems with the help of lineage graphs.

- Analyze root causes: Trace errors related to entries and data operations back to their root causes.

- Conduct impact analyses: Enable better change management through thorough review of the downstream impacts of data updates, which can help you to avoid downtime or unexpected errors, understand dependent entries, and collaborate with relevant stakeholders.

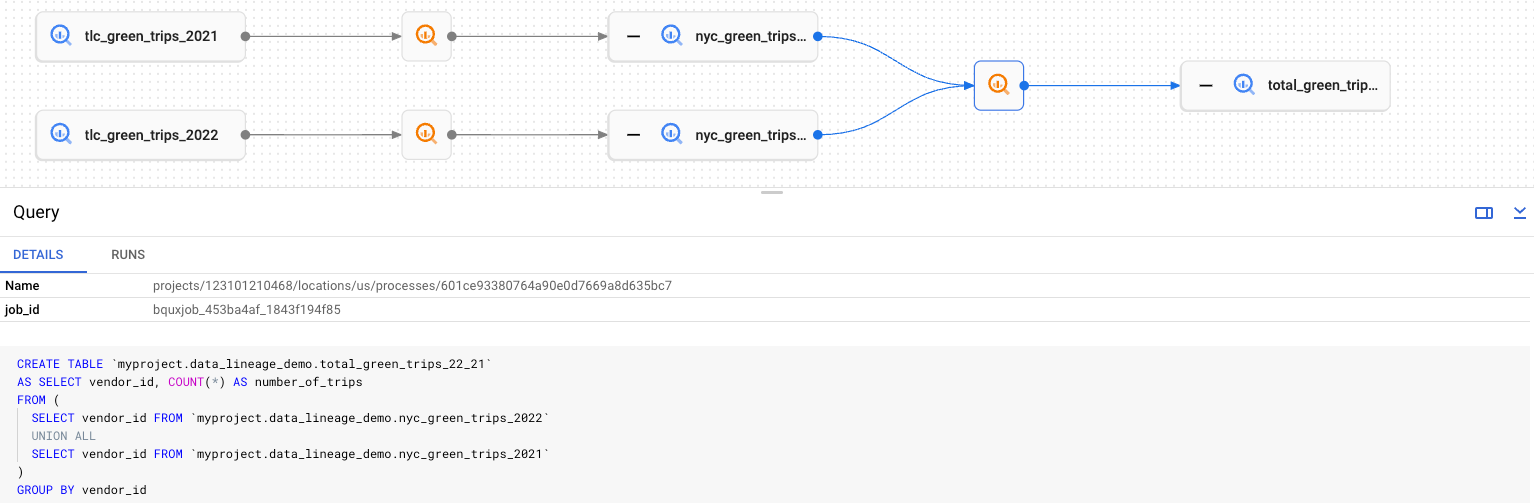

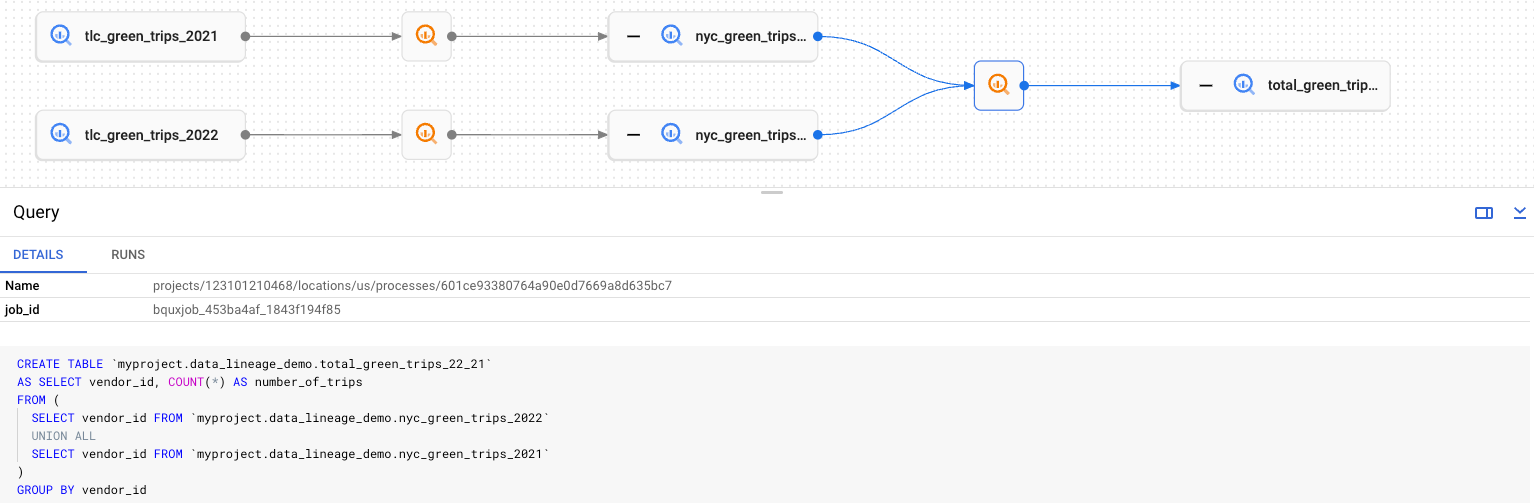

In Knowledge Catalog, you can review the lineage graph to see the lineage that has been natively gathered or manually added through the Data Lineage API. The lineage graph displays the upstream and downstream lineage of a root entry, which is the current entry that you are viewing. For example, the lineage graph below shows two data assets that are combined into a new data asset through a SQL query that includes a UNION statement. The two combined data assets are each part of the upstream lineage of the combined table, which is in the downstream lineage of each of the original tables.
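To make the upstream and downstream terminology concrete, here is a minimal sketch that models the UNION example as a list of (source, target) links. The table names are hypothetical stand-ins, and the data structure is only an illustration, not how Knowledge Catalog stores lineage.

```python
from collections import defaultdict

# Each link is (source, target): data flows from the source into the target.
# The table names are hypothetical stand-ins for the UNION example.
LINKS = [
    ("table_a", "combined_table"),
    ("table_b", "combined_table"),
]


def build_indexes(links):
    """Index links by target (upstream lookup) and by source (downstream lookup)."""
    upstream = defaultdict(set)
    downstream = defaultdict(set)
    for source, target in links:
        upstream[target].add(source)
        downstream[source].add(target)
    return upstream, downstream


upstream, downstream = build_indexes(LINKS)

# Both original tables are upstream of the combined table.
print(sorted(upstream["combined_table"]))  # ['table_a', 'table_b']
# The combined table is downstream of each original table.
print(sorted(downstream["table_a"]))  # ['combined_table']
```

Viewing combined_table as the root entry shows two upstream entries, while viewing table_a as the root entry shows one downstream entry.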

Furthermore, through the Data Lineage API, you can import OpenLineage events to manually add additional lineage, alongside the lineage captured from natively supported Google Cloud services such as BigQuery, Dataflow, Managed Service for Apache Spark, Data Fusion, Cloud Composer, and Agent Platform. OpenLineage is an open platform for collecting and analyzing data lineage information. Data professionals use it to capture lineage events from data pipeline components that use an OpenLineage API, such as Apache Airflow, Apache Spark, and dbt. With the Data Lineage API, you can manually add lineage information for any non-natively supported data sources, including data transformation jobs that occurred outside of the Google Cloud project.

In this lab, you start with a BigQuery dataset that has been loaded from data transformed outside of the Google Cloud project. Specifically, there are 89 BigQuery tables already loaded in a dataset named qwiklabs_bank that contain various data on bank customers, ATM transactions, loans, and more. These tables have been created through Apache Spark jobs outside of the Google Cloud project, and therefore, the lineage is not already captured. Throughout the lab, you run an additional Managed Service for Apache Spark job to transform the data further with the lineage captured. Then, you use data lineage graphs and the Data Lineage API to conduct impact analysis through exploring the downstream implications of updating specific tables and to add an OpenLineage event to capture data transformations from Apache Spark jobs executed outside of the Google Cloud project.

What you'll do

In this lab, you learn how to:

- Run a Serverless for Apache Spark job in Managed Service for Apache Spark to transform data with the lineage captured.

- Create an aspect type for critical data and add the aspect to BigQuery tables.

- Conduct impact analysis using data lineage graphs and the Data Lineage API to explore the downstream implications of data updates.

- Submit a Data Lineage API request to pull the downstream lineage and dependencies of an entry.

- Enter a one-off OpenLineage event into Knowledge Catalog data lineage.

Prerequisites

To complete this lab successfully, it is recommended that you have some foundational knowledge of data lineage and Knowledge Catalog, specifically:

- Understand the basic concepts of data lineage and why it is useful to document transformations from any processes that impact your data.

- Be familiar with Knowledge Catalog fundamentals including how to use aspect types to capture metadata about assets.

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources are made available to you.

This hands-on lab lets you do the lab activities in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

Note: Use an Incognito (recommended) or private browser window to run this lab. This prevents conflicts between your personal account and the student account, which may cause extra charges to be incurred on your personal account.

- Time to complete the lab—remember, once you start, you cannot pause a lab.

Note: Use only the student account for this lab. If you use a different Google Cloud account, you may incur charges to that account.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a dialog opens for you to select your payment method.

On the left is the Lab Details pane with the following:

- The Open Google Cloud console button

- Time remaining

- The temporary credentials that you must use for this lab

- Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}}

You can also find the Username in the Lab Details pane.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}}

You can also find the Password in the Lab Details pane.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

- Accept the terms and conditions.

- Do not add recovery options or two-factor authentication (because this is a temporary account).

- Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Note: To access Google Cloud products and services, click the Navigation menu or type the service or product name in the Search field.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell at the top of the Google Cloud console.

- Click through the following windows:

- Continue through the Cloud Shell information window.

- Authorize Cloud Shell to use your credentials to make Google Cloud API calls.

When you are connected, you are already authenticated, and the project is set to your Project_ID. The output contains a line that declares the Project_ID for this session:

Your Cloud Platform project in this session is set to {{{project_0.project_id | "PROJECT_ID"}}}

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command:

gcloud auth list

- Click Authorize.

Output:

ACTIVE: *

ACCOUNT: {{{user_0.username | "ACCOUNT"}}}

To set the active account, run:

$ gcloud config set account `ACCOUNT`

- (Optional) You can list the project ID with this command:

gcloud config list project

Output:

[core]

project = {{{project_0.project_id | "PROJECT_ID"}}}

Note: For full documentation of gcloud, refer to the gcloud CLI overview guide in the Google Cloud documentation.

Enable the necessary APIs

- In the Google Cloud console title bar, type Data Lineage API in the Search field, and then click Data Lineage API in the search results.

If the Data Lineage API has not been enabled, click Enable.

- Repeat step 1 to check if the Cloud Dataplex API has been enabled.

If the Cloud Dataplex API has not been enabled, click Enable.

- In Cloud Shell, run the following commands to force a restart of the Dataproc API:

gcloud services disable dataproc.googleapis.com --force

gcloud services enable dataproc.googleapis.com

Task 1. Run a Serverless for Apache Spark job in Managed Service for Apache Spark with lineage captured

Google Cloud Serverless for Apache Spark workloads and sessions are natively integrated with Knowledge Catalog, allowing you to automatically capture data lineage events after you enable data lineage either at the project level or for a specific workflow or session. Once lineage is enabled, these events are captured and published to Knowledge Catalog.

In this task, you enable data lineage on Google Cloud Serverless for Apache Spark workloads at the project level, and then you run a Serverless for Apache Spark job in Managed Service for Apache Spark to review the captured lineage in a later task.

Set metadata keys and values for Managed Service for Apache Spark

To enable data lineage at the project level, you can set custom project metadata for Managed Service for Apache Spark. After you set these values, all subsequent Spark batch workloads and interactive sessions that you run in the project will have data lineage enabled.

- In the Google Cloud console, on the Navigation menu, select Compute Engine.

- On the left menu pane, under Settings, click Metadata.

- Click Edit, and then click Add item.

- For Key 4, enter: DATAPROC_LINEAGE_ENABLED

- For Value 4, enter: true

- Click Add item again to add another key-value pair:

| Key 5 | Value 5 |
| DATAPROC_CLUSTER_SCOPES | https://www.googleapis.com/auth/cloud-platform |

- Click Save.
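If you prefer to script this step, the same two key-value pairs can likely be set from the command line. The following is a minimal sketch that only builds and prints a gcloud compute project-info add-metadata command; it assumes that the CLI route has the same effect as the console steps above, which this lab does not verify. The helper name add_metadata_command is hypothetical.

```python
import shlex

# Keys and values exactly as entered in the console steps above.
LINEAGE_METADATA = {
    "DATAPROC_LINEAGE_ENABLED": "true",
    "DATAPROC_CLUSTER_SCOPES": "https://www.googleapis.com/auth/cloud-platform",
}


def add_metadata_command(metadata):
    """Build the argv for `gcloud compute project-info add-metadata`.

    Hypothetical helper: it joins the pairs as KEY=VALUE,KEY=VALUE and
    assumes that no key or value contains a comma.
    """
    pairs = ",".join(f"{key}={value}" for key, value in metadata.items())
    return ["gcloud", "compute", "project-info", "add-metadata", f"--metadata={pairs}"]


command = add_metadata_command(LINEAGE_METADATA)
# Print a copy-pasteable command line instead of running it.
print(shlex.join(command))
```

The sketch prints the command rather than executing it, so you can review it before pasting it into Cloud Shell.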

Run the Serverless for Apache Spark job in Managed Service for Apache Spark

Now that data lineage has been enabled at the project level, you can run a Serverless for Apache Spark job in Managed Service for Apache Spark with lineage captured.

- In Cloud Shell, run the following code to set the variables for project ID and project number:

PROJECT_ID=$(gcloud config get-value project)

PROJECT_NUMBER=$(gcloud projects describe $PROJECT_ID --format='value(projectNumber)')

- Run the following code to create a variable for region and use it to configure the region for Managed Service for Apache Spark and Compute Engine:

export REGION={{{project_0.default_region | "filled in at lab start"}}}

gcloud config set dataproc/region $REGION

gcloud config set compute/region $REGION

- Run the following to create a bucket for the Managed Service for Apache Spark job:

export BUCKET_NAME="${PROJECT_ID}-bucket"

gcloud storage buckets create gs://$BUCKET_NAME --location=$REGION

- Run the following to copy the necessary script files to the local directory in Cloud Shell:

gcloud storage cp gs://spls/gsp1346/scripts/* .

- Run the following to list the script files that have been copied to the local directory in Cloud Shell:

ls -la *.py

In this lab environment, the BigQuery dataset named qwiklabs_bank contains tables that have been created from previous executions of qwiklabs_bank_1.py through qwiklabs_bank_9.py, so you only need to run qwiklabs_bank_10.py.

In the next steps, after you grant the necessary role to the service account and enable Private Google Access, you execute qwiklabs_bank_10.py.

- Open and review qwiklabs_bank_10.py using Nano to learn how data is transformed in the script:

nano qwiklabs_bank_10.py

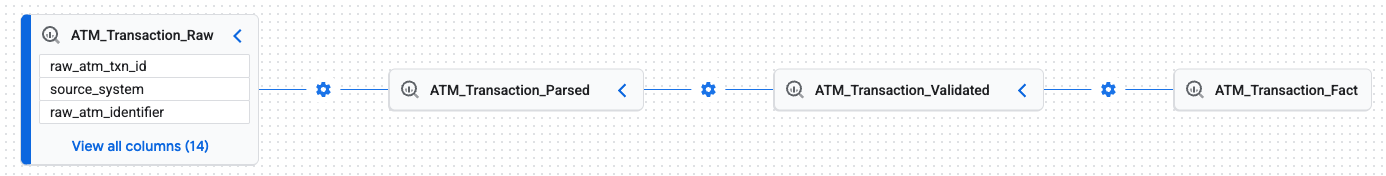

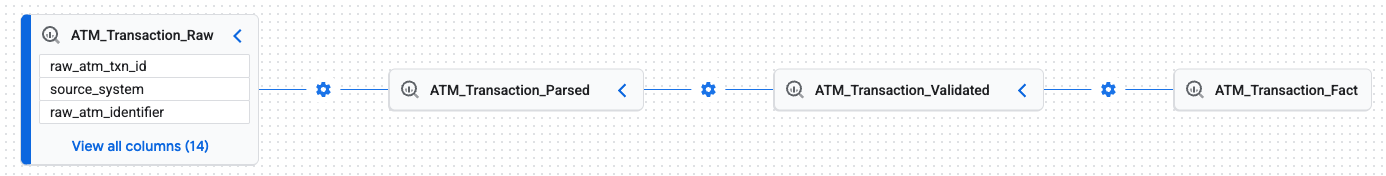

As you scroll in the file, notice that one section in this script begins with raw ATM transaction data and completes some transformations to validate and finalize the ATM transaction data. In a later task, you explore the lineage for this ATM transaction data.

For example:

# Table 93: Parsed ATM Transaction Data
df_parsed = (
    read_table(spark, "qwiklabs_bank.ATM_Transaction_Raw")
    .select(
        "raw_atm_txn_id",
        "source_system",
        F.trim("raw_atm_identifier").alias("raw_atm_identifier"),
        F.trim("raw_card_id").alias("raw_card_id"),
        F.trim("raw_account_id").alias("raw_account_id"),
        F.trim("txn_type_raw").alias("atm_txn_type_code"),
        F.to_timestamp("txn_timestamp_text").alias("transaction_timestamp"),
        F.col("requested_amount_text")
        .cast(DecimalType(18, 2))
        .alias("requested_amount"),
        F.col("dispensed_amount_text")
        .cast(DecimalType(18, 2))
        .alias("dispensed_amount"),
        F.col("deposit_amount_text")
        .cast(DecimalType(18, 2))
        .alias("deposit_amount"),
        F.upper(F.trim("currency_raw")).alias("currency_code"),
        F.trim("response_code_raw").alias("response_code"),
        F.trim("auth_code_raw").alias("authorization_code"),
    )
    .withColumn("parsing_timestamp", F.current_timestamp())
)

save_table(df_parsed, "qwiklabs_bank.ATM_Transaction_Parsed")

- Press CTRL+X to close the file.

- Next, run the following to give the Storage Admin role to the Compute Engine default service account:

gcloud projects add-iam-policy-binding $PROJECT_ID \

--member=serviceAccount:$PROJECT_NUMBER-compute@developer.gserviceaccount.com \

--role=roles/storage.admin

- Now, enable Private Google Access on your subnetwork by running the following command:

gcloud compute networks subnets update default --region=$REGION --enable-private-ip-google-access

- Last, run the Managed Service for Apache Spark job using qwiklabs_bank_10.py:

gcloud dataproc batches submit pyspark qwiklabs_bank_10.py \

--region=$REGION \

--properties=spark.dataproc.lineage.enabled=true \

--deps-bucket=$BUCKET_NAME

Note: If you receive an error related to the service account permissions (which you assigned earlier in this task), wait a few minutes, and then try to run the job again. You can safely ignore the warning "No runtime version specified. Using the default runtime version." and proceed with the lab instructions. If you receive any other Managed Service for Apache Spark errors, be sure that you have restarted the Dataproc API following the instructions in the previous section named Enable the necessary APIs.

Note: It can take a few minutes after the Managed Service for Apache Spark job is completed before the progress check returns a successful message.
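The advice to wait and try again can be automated with a small retry wrapper. The following is a minimal sketch under stated assumptions: run_with_retry and submit_batch_command are hypothetical helper names, the two-minute delay is an arbitrary choice, and the wrapper retries on any non-zero exit code rather than only on permission errors.

```python
import shlex
import subprocess
import time


def run_with_retry(command, attempts=3, delay_seconds=120,
                   runner=subprocess.run, sleep=time.sleep):
    """Run a command, pausing and retrying when it exits with a non-zero status.

    Returns the 1-based number of the attempt that succeeded.
    Raises RuntimeError if every attempt fails.
    """
    for attempt in range(1, attempts + 1):
        result = runner(command)
        if result.returncode == 0:
            return attempt
        if attempt < attempts:
            sleep(delay_seconds)
    raise RuntimeError(f"command failed after {attempts} attempts")


def submit_batch_command(script, region, bucket):
    """Mirror the `gcloud dataproc batches submit pyspark` call used in this task."""
    return [
        "gcloud", "dataproc", "batches", "submit", "pyspark", script,
        f"--region={region}",
        "--properties=spark.dataproc.lineage.enabled=true",
        f"--deps-bucket={bucket}",
    ]


# The region and bucket values here are placeholders; use your lab values.
command = submit_batch_command("qwiklabs_bank_10.py", "REGION", "BUCKET_NAME")
print(shlex.join(command))
```

To actually submit the job with retries, you would call run_with_retry(command) after replacing the placeholders. The runner and sleep parameters exist so that the retry logic can be exercised without calling gcloud.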


Task 2. Create an aspect type for critical data and add the aspect to BigQuery tables

In Knowledge Catalog, aspects allow you to add custom metadata to assets for easy identification and retrieval, such as adding an aspect to certain assets to identify that they contain critical or sensitive data. You can also create reusable aspect types to rapidly add the same aspects to different data assets.

In this task, you create an aspect type for critical data and add the aspect to BigQuery tables that you want to label as critical data. In later tasks, you use the aspects to identify the downstream implications of data updates to specific tables, based on whether their downstream entries have an aspect for critical data.

Create an aspect type for critical data

- In the Google Cloud console, on the Navigation menu, click View all products. Under Analytics, click Knowledge Catalog.

- On the left menu pane, under Manage Metadata, click Metadata types.

- Click Create in the Aspect Types pane.

- Enter the required information to define the aspect type:

| Property | Value |
| Display Name | Critical Data Aspect |
| Location | |

- In the Template section, click Add field and enter the required information to add a new field to the aspect type:

| Property | Value |
| Field Display Name | Critical Data Flag |
| Type | Enum |

- Select the Is Required checkbox.

- Under Enum values, click Add an enum value.

- For Value, type: Yes

- Click Done.

- Click Add an enum value again.

- For Value, type: No

- Click Done.

- Click Save.

Note: It can take a few minutes after the aspect type is created before the progress check returns a successful message.
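The aspect type you just defined behaves like a small schema: one required enum field that accepts Yes or No. The following sketch illustrates that idea with a simplified, hypothetical template structure. It is not the Knowledge Catalog API schema, and validate_aspect is an illustrative helper, not a library function.

```python
# Simplified, hypothetical model of the aspect type defined above.
# This is NOT the Knowledge Catalog API schema.
CRITICAL_DATA_ASPECT = {
    "critical-data-flag": {"type": "enum", "required": True, "values": ["Yes", "No"]},
}


def validate_aspect(data, template):
    """Return a list of problems found in `data`; an empty list means valid."""
    problems = []
    for field, rules in template.items():
        if field not in data:
            if rules["required"]:
                problems.append(f"missing required field: {field}")
            continue
        if rules["type"] == "enum" and data[field] not in rules["values"]:
            problems.append(f"invalid value for {field}: {data[field]!r}")
    for field in data:
        if field not in template:
            problems.append(f"unknown field: {field}")
    return problems


print(validate_aspect({"critical-data-flag": "Yes"}, CRITICAL_DATA_ASPECT))
print(validate_aspect({"critical-data-flag": "Maybe"}, CRITICAL_DATA_ASPECT))
print(validate_aspect({}, CRITICAL_DATA_ASPECT))
```

The first call returns an empty list, the second reports an invalid enum value, and the third reports the missing required field.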


Add a critical data aspect to BigQuery tables

- On the left menu pane, under Discover, click Search.

- On the Search title bar, select Knowledge Catalog.

- For Filters > Systems, select the BigQuery checkbox.

- In the search box for Find resources across projects, enter ATM_Transaction_Fact, and then click ATM_Transaction_Fact from the search results.

If you do not see the ATM_Transaction_Fact table, make sure that the search platform is selected as Knowledge Catalog on the top right.

- Scroll down to the Aspects section. Next to Optional aspects, click Add.

- Type Critical Data Aspect in the Filter field, and then click the Critical Data Aspect aspect from the results.

- For Critical Data Flag, select Yes.

- Click Save.

- Repeat steps 4-8 for the table named Customer_Interaction_Summary.

Note: It can take a few minutes after the aspect is added to the tables before the progress check returns a successful message.


Task 3. Conduct impact analysis using data lineage graphs to explore the implications of data updates

Data lineage views are available for Knowledge Catalog entries, BigQuery assets, and Agent Platform resources. These lineage views are provided in graph and table formats and are very useful resources for conducting impact analysis to proactively identify downstream assets that could be affected by changes to a source table. Specifically, these lineage views allow you to visualize data asset flow and relationships, which is crucial for tracking data dependencies and understanding the full journey of a data asset.

In this task, you conduct impact analysis by visually exploring the data lineage graphs for specific BigQuery tables (some of which were transformed by the job you ran in Task 1) to identify whether there are any downstream implications of data updates to the reviewed tables.

Review the lineage for Customer_Master

- In the Google Cloud console, on the Navigation menu, select BigQuery.

- In the Explorer pane, expand the arrow next to the project ID, and then expand the arrow next to the dataset named qwiklabs_bank.

- Under the dataset named qwiklabs_bank, click the table named Customer_Master.

- In the table details pane, click the Lineage tab.

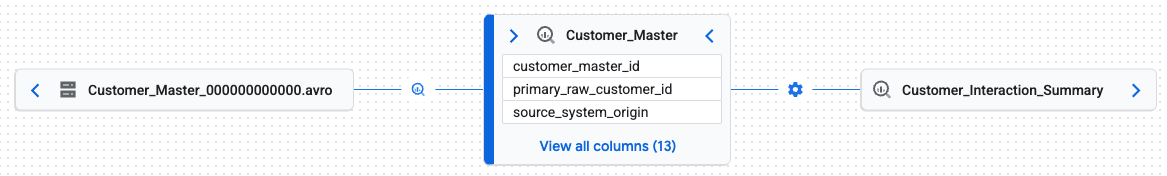

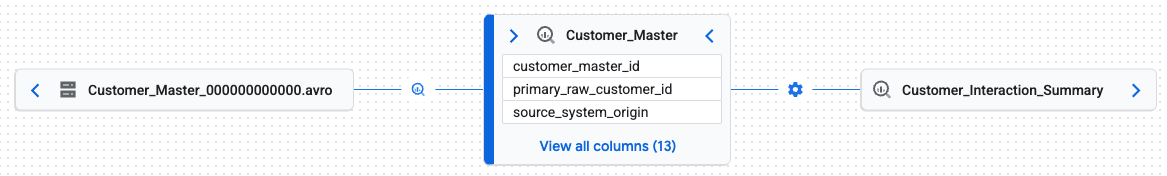

In the Graph view, notice that the table has an upstream entry that is an AVRO file, and a downstream entry named Customer_Interaction_Summary.

In addition to the Graph view, you can also review the List view to see the upstream and downstream entries for the table.

- On the tab menu bar above the graph, click List (next to Graph) to see the list view for lineage.

Notice that the same information as the Graph view is now provided as a table.

- Click Graph to return to the graph view for Customer_Master, and click the box for the downstream entry named Customer_Interaction_Summary.

- In the details pane for Customer_Interaction_Summary, scroll down to Aspects and review which aspects have been added to this table.

Based on the lineage information for Customer_Master and Customer_Interaction_Summary, answer the following question.

Review the lineage for ATM_Transaction_Raw

- Repeat steps 3-7 in the previous section to review the lineage for the table named ATM_Transaction_Raw, and answer the following question.

Be sure to click the Expand arrow for each downstream entry to see the full lineage for ATM_Transaction_Raw, which includes three downstream entries ending with ATM_Transaction_Fact.

Identify the Fully Qualified Name (FQN) for ATM_Transaction_Raw

- In the Graph view, click on the box for ATM_Transaction_Raw.

- In the details menu (right side), locate the section named Identifiers, and click the copy button for FQN, which is the fully qualified name provided as bigquery:project_id.dataset_name.table_name.

Note: To see the FQN value, you can paste the copied FQN into Cloud Shell.

You use the FQN in the next task to programmatically pull the downstream lineage data for this table.
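Because the FQN follows the fixed pattern bigquery:project_id.dataset_name.table_name, you can build or parse it in code. The following is a minimal sketch; BigQueryTable, to_fqn, and parse_fqn are hypothetical helper names, my-project is a placeholder project ID, and the parser assumes that the project ID itself contains no dots or colons.

```python
from typing import NamedTuple


class BigQueryTable(NamedTuple):
    project_id: str
    dataset: str
    table: str


def to_fqn(ref: BigQueryTable) -> str:
    """Build a fully qualified name in the form bigquery:project.dataset.table."""
    return f"bigquery:{ref.project_id}.{ref.dataset}.{ref.table}"


def parse_fqn(fqn: str) -> BigQueryTable:
    """Split a BigQuery table FQN into its parts.

    Assumes the project ID contains no dots or colons.
    """
    system, _, path = fqn.partition(":")
    if system != "bigquery":
        raise ValueError(f"not a BigQuery FQN: {fqn!r}")
    parts = path.split(".")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected project.dataset.table, got: {path!r}")
    return BigQueryTable(*parts)


# "my-project" is a placeholder; use your lab project ID.
ref = BigQueryTable("my-project", "qwiklabs_bank", "ATM_Transaction_Raw")
fqn = to_fqn(ref)
print(fqn)  # bigquery:my-project.qwiklabs_bank.ATM_Transaction_Raw
print(parse_fqn(fqn).table)  # ATM_Transaction_Raw
```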

Task 4. Submit a Data Lineage API request to pull the downstream lineage and identify dependencies

In addition to visually reviewing the lineage views available for some assets such as BigQuery tables, you can use the Data Lineage API to programmatically pull downstream lineage and related metadata about any asset that has associated data lineage.

In this task, you send requests to the Data Lineage API to programmatically pull the downstream lineage and dependencies of an entry (rather than visually reviewing the data lineage graph in BigQuery) as well as review the associated metadata for dependencies such as aspects that have been added to the assets.

Pull downstream lineage for ATM_Transaction_Raw

- In Cloud Shell, configure authorization to make calls to the Data Lineage API:

alias gcurl='curl -H "Authorization: Bearer $(gcloud auth print-access-token)" -H "Content-Type: application/json"'

- Run the following to pull downstream lineage for the table named ATM_Transaction_Raw.

gcurl -X POST https://datalineage.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:searchLinks -d "$(cat <<EOF

{

"source": { "fullyQualifiedName": "bigquery:{{{project_0.project_id | Project ID}}}.qwiklabs_bank.ATM_Transaction_Raw"

}

}

EOF

)"

The output includes a section that resembles the following:

{

"source": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Raw"

},

...

"target": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Parsed"

}

From this API request, you have identified that the first entry in the downstream lineage for ATM_Transaction_Raw is the table named ATM_Transaction_Parsed.
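If you want to script this lookup instead of reading the JSON by eye, the request body and the target names can be handled in a few lines. The following is a minimal sketch that makes no network call. The helper names are hypothetical, my-project and us-central1 are placeholders, and the sample response assumes that the link objects shown above are wrapped in a top-level links list.

```python
import json

API_ROOT = "https://datalineage.googleapis.com/v1"


def search_links_request(project_id, location, source_fqn):
    """Return the URL and JSON body for a searchLinks call on a source entry."""
    url = f"{API_ROOT}/projects/{project_id}/locations/{location}:searchLinks"
    body = {"source": {"fullyQualifiedName": source_fqn}}
    return url, json.dumps(body)


def target_names(response):
    """Collect the target FQNs from a parsed searchLinks response.

    Assumes the links are listed under a top-level "links" key.
    """
    return [link["target"]["fullyQualifiedName"] for link in response.get("links", [])]


# Placeholder project and region; substitute your lab values.
url, body = search_links_request(
    "my-project", "us-central1", "bigquery:my-project.qwiklabs_bank.ATM_Transaction_Raw"
)
print(url)

# Hand-written sample shaped like the output shown above.
sample_response = {
    "links": [
        {
            "source": {"fullyQualifiedName": "bigquery:my-project.qwiklabs_bank.ATM_Transaction_Raw"},
            "target": {"fullyQualifiedName": "bigquery:my-project.qwiklabs_bank.ATM_Transaction_Parsed"},
        }
    ]
}
print(target_names(sample_response))
```

An empty response yields an empty list, which is a convenient signal that an entry has no recorded downstream links.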

Pull downstream lineage for ATM_Transaction_Parsed

- Repeat step 2 from the previous section to pull the downstream lineage for the table named ATM_Transaction_Parsed.

gcurl -X POST https://datalineage.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:searchLinks -d "$(cat <<EOF

{

"source": { "fullyQualifiedName": "bigquery:{{{project_0.project_id | Project ID}}}.qwiklabs_bank.ATM_Transaction_Parsed"

}

}

EOF

)"

The output includes a section that resembles the following:

{

"source": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Parsed"

},

...

"target": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Validated"

}

From this API request, you have identified that the second entry in the downstream lineage for ATM_Transaction_Raw is the table named ATM_Transaction_Validated.

Pull downstream lineage for ATM_Transaction_Validated

- Repeat the step from the previous section to pull the downstream lineage for the table named ATM_Transaction_Validated.

gcurl -X POST https://datalineage.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:searchLinks -d "$(cat <<EOF

{

"source": { "fullyQualifiedName": "bigquery:{{{project_0.project_id | Project ID}}}.qwiklabs_bank.ATM_Transaction_Validated"

}

}

EOF

)"

The output includes a section that resembles the following:

{

"source": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Validated"

},

...

"target": {

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Fact"

}

From this API request, you have identified that the third entry in the downstream lineage for ATM_Transaction_Raw is the table named ATM_Transaction_Fact.

Pull downstream lineage for ATM_Transaction_Fact

- Repeat the step from the previous section to pull the downstream lineage for the table named ATM_Transaction_Fact.

gcurl -X POST https://datalineage.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:searchLinks -d "$(cat <<EOF

{

"source": { "fullyQualifiedName": "bigquery:{{{project_0.project_id | Project ID}}}.qwiklabs_bank.ATM_Transaction_Fact"

}

}

EOF

)"

Notice that this API request does not produce an output. From this API request, you have identified that ATM_Transaction_Fact is the final downstream entry for ATM_Transaction_Raw.
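The four requests above follow one pattern: look up the direct downstream entries, then repeat for each result until nothing is returned. The following is a minimal sketch of that loop as a breadth-first walk. The search_targets argument is a stand-in for the API call, and the LINKS dictionary hard-codes the results found in this task using short table names instead of full FQNs.

```python
from collections import deque


def walk_downstream(start, search_targets):
    """Breadth-first walk over downstream lineage.

    search_targets(name) must return the direct downstream entries of `name`.
    Returns every downstream entry in the order it was discovered.
    """
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for target in search_targets(current):
            if target not in seen:
                seen.add(target)
                order.append(target)
                queue.append(target)
    return order


# Stand-in for the API: the links found in this task, keyed by table name.
LINKS = {
    "ATM_Transaction_Raw": ["ATM_Transaction_Parsed"],
    "ATM_Transaction_Parsed": ["ATM_Transaction_Validated"],
    "ATM_Transaction_Validated": ["ATM_Transaction_Fact"],
    "ATM_Transaction_Fact": [],
}

chain = walk_downstream("ATM_Transaction_Raw", lambda name: LINKS.get(name, []))
print(chain)
# ['ATM_Transaction_Parsed', 'ATM_Transaction_Validated', 'ATM_Transaction_Fact']
```

The seen set guards against revisiting an entry, so the walk also terminates if the lineage contains a cycle or a table with several upstream paths.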

Get the Knowledge Catalog entry name for ATM_Transaction_Fact

- Run the following to identify the Knowledge Catalog entry name for ATM_Transaction_Fact:

gcurl -X POST https://dataplex.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:searchEntries -d "$(cat <<EOF

{

"pageSize": 3,

"query": "bigquery:{{{project_0.project_id | Project ID}}}.qwiklabs_bank.ATM_Transaction_Fact"

}

EOF

)"

The output includes a section that resembles the following:

{

"results": [

{

"dataplexEntry": {

"name": "projects/36248353385/locations/europe-west1/entryGroups/@bigquery/entries/bigquery.googleapis.com/projects/qwiklabs-gcp-00-3a54f469b10e/datasets/qwiklabs_bank/tables/ATM_Transaction_Fact",

...

"fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Fact",

...

}

In the example above, the Knowledge Catalog entry name is:

projects/36248353385/locations/europe-west1/entryGroups/@bigquery/entries/bigquery.googleapis.com/projects/qwiklabs-gcp-00-3a54f469b10e/datasets/qwiklabs_bank/tables/ATM_Transaction_Fact

- Copy the Knowledge Catalog entry name from your results, so that you can use it in the next section to retrieve additional metadata.
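Instead of copying the entry name by hand, you could pick it out of the parsed JSON. The following is a minimal sketch that relies only on the fields visible in the sample output above (results, dataplexEntry, name, and fullyQualifiedName). The helper name find_entry_name is hypothetical, and the sample response is trimmed to those fields.

```python
def find_entry_name(response, fqn):
    """Return the entry name whose fullyQualifiedName matches `fqn`, or None."""
    for result in response.get("results", []):
        entry = result.get("dataplexEntry", {})
        if entry.get("fullyQualifiedName") == fqn:
            return entry.get("name")
    return None


# Trimmed copy of the sample output shown above.
sample_response = {
    "results": [
        {
            "dataplexEntry": {
                "name": "projects/36248353385/locations/europe-west1/entryGroups/@bigquery/entries/bigquery.googleapis.com/projects/qwiklabs-gcp-00-3a54f469b10e/datasets/qwiklabs_bank/tables/ATM_Transaction_Fact",
                "fullyQualifiedName": "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Fact",
            }
        }
    ]
}

entry_name = find_entry_name(
    sample_response,
    "bigquery:qwiklabs-gcp-00-3a54f469b10e.qwiklabs_bank.ATM_Transaction_Fact",
)
print(entry_name)
```

Matching on the exact FQN avoids picking up a different entry when the search returns more than one result.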

Get metadata for Knowledge Catalog entry for ATM_Transaction_Fact to identify aspects

- Paste the Knowledge Catalog entry name that you copied in the previous section into the command below by replacing DATAPLEX_ENTRY_NAME (be sure to leave the ? after DATAPLEX_ENTRY_NAME):

gcurl -X GET "https://dataplex.googleapis.com/v1/DATAPLEX_ENTRY_NAME?view=ALL"

The output includes a section that resembles the following:

{

"name": "projects/36248353385/locations/europe-west1/entryGroups/@bigquery/entries/bigquery.googleapis.com/projects/qwiklabs-gcp-00-3a54f469b10e/datasets/qwiklabs_bank/tables/ATM_Transaction_Fact",

"entryType": "projects/655216118709/locations/global/entryTypes/bigquery-table",

...

"aspects": {

"36248353385.europe-west1.critical-data-aspect": {

"aspectType": "projects/36248353385/locations/europe-west1/aspectTypes/critical-data-aspect",

"createTime": "2025-10-10T19:06:16.709668Z",

"updateTime": "2025-10-10T19:06:16.709668Z",

"data": {

"critical-data-flag": "Yes"

},

...

Notice the critical data flag with a value of Yes for ATM_Transaction_Fact.

From these API requests, you have programmatically determined that updating or deleting the table named ATM_Transaction_Raw has downstream implications for a table with a critical data aspect (ATM_Transaction_Fact).
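The final check, whether an entry carries a critical data flag of Yes, can also be expressed in code. The following is a minimal sketch that reads the aspects map as it appears in the sample output above. The helper name critical_aspects is hypothetical, and the sample entry is trimmed to the fields the check needs.

```python
def critical_aspects(entry, field="critical-data-flag", flagged_value="Yes"):
    """Return the keys of aspects whose data marks the entry as critical."""
    return [
        key
        for key, aspect in entry.get("aspects", {}).items()
        if aspect.get("data", {}).get(field) == flagged_value
    ]


# Trimmed copy of the sample output shown above.
sample_entry = {
    "aspects": {
        "36248353385.europe-west1.critical-data-aspect": {
            "aspectType": "projects/36248353385/locations/europe-west1/aspectTypes/critical-data-aspect",
            "data": {"critical-data-flag": "Yes"},
        }
    }
}

flagged = critical_aspects(sample_entry)
print(flagged)  # ['36248353385.europe-west1.critical-data-aspect']
print(bool(flagged))  # True
```

An entry without the aspect, or with the flag set to No, produces an empty list.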


Task 5. Enter a one-off OpenLineage event using the Data Lineage API

In addition to programmatically pulling downstream lineage through the Data Lineage API, you can also use the API to import OpenLineage events for data transformations that occurred outside of your current Google Cloud project. For assets that have data lineage views such as BigQuery tables, you can also review these imported events in the graph or table views for lineage.

In this task, you use the Data Lineage API to add an OpenLineage event to capture data transformations from an Apache Spark job executed outside of the Google Cloud project. Then, you return to BigQuery to see the lineage view for the OpenLineage event you added through the API.

- Return to BigQuery, and repeat steps 2-7 from Task 3 (in the section named Review the lineage for Customer_Master) to visually review the lineage graph for Customer_Data_Parsed.

Notice that the only upstream lineage for this table is an AVRO file that was used during the import to BigQuery.

- In Cloud Shell, review qwiklabs_bank_1.py using Nano to explore how Customer_Data_Raw was used to create Customer_Data_Parsed (lines 282-308):

nano qwiklabs_bank_1.py

As you scroll in the file, notice that one section in this script begins with raw customer data and completes some transformations to parse the data into a new table.

For example:

# Table 10: Customer_Data_Parsed
df_raw = (
    spark.read.format("bigquery")
    .option("table", "qwiklabs_bank.Customer_Data_Raw")
    .load()
)

df_parsed = df_raw.select(
    F.col("raw_customer_id"),
    F.col("source_system"),
    F.col("first_name"),
    F.col("last_name"),
    F.to_date(F.col("date_of_birth_text"), "yyyy-MM-dd").alias(
        "date_of_birth"
    ),
    F.col("email_address"),
    F.col("phone_number"),
    F.col("raw_address_line1"),
    F.col("raw_address_line2"),
    F.col("raw_city"),
    F.col("raw_state_province"),
    F.col("raw_postal_code"),
    F.upper(F.col("raw_country_code")).alias("country_code_alpha2"),
    F.upper(F.col("initial_status_code")).alias("status_code"),
    F.col("ingestion_timestamp").alias("raw_ingestion_timestamp"),
    F.col("processing_batch_id"),
).withColumn("parsing_timestamp", F.current_timestamp())

save_table(df_parsed, "qwiklabs_bank.Customer_Data_Parsed")

- Press CTRL+X to close the file.

- Run the following command to post a one-off OpenLineage event into the lineage for Customer_Data_Parsed.

gcurl -X POST https://datalineage.googleapis.com/v1/projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}:processOpenLineageRunEvent \

--data '{"eventTime":"2023-04-04T13:21:16.098Z","eventType":"COMPLETE","inputs":[{"name":"{{{project_0.project_id | Project ID}}}.qwiklabs_bank.Customer_Data_Raw","namespace":"bigquery"}],"job":{"name":"somename","namespace":"somenamespace"},"outputs":[{"name":"{{{project_0.project_id | Project ID}}}.qwiklabs_bank.Customer_Data_Parsed","namespace":"bigquery"}],"producer":"https://github.com/OpenLineage/OpenLineage/blob/v1-0-0/client","run":{"runId":"4321"},"schemaURL":"https://openlineage.io/spec/1-0-5/OpenLineage.json#/$defs/RunEvent"}'

Note: If you receive an error related to authorization, repeat the first step in the task named Submit a Data Lineage API request to pull the downstream lineage and identify dependencies to reconfigure authorization to make calls to the Data Lineage API.

- Return to BigQuery, and visually review the updated lineage for Customer_Data_Parsed (refresh the page as needed).

Notice that there is a new upstream entry capturing that Customer_Data_Raw was used to create Customer_Data_Parsed.
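The single-line JSON payload in the command above is easier to read and reuse when it is built in code. The following is a minimal sketch that reproduces the same fields. The helper names are hypothetical, my-project is a placeholder project ID, and the job name and namespace are the placeholder values from the command. Unlike the lab command, which uses the fixed run ID 4321, the sketch generates a UUID by default; that is an assumption about what you would want for repeated events, not a lab requirement.

```python
import json
import uuid
from datetime import datetime, timezone


def bigquery_dataset(project_id, dataset, table):
    """Describe a BigQuery table as an OpenLineage input or output dataset."""
    return {"namespace": "bigquery", "name": f"{project_id}.{dataset}.{table}"}


def run_event(inputs, outputs, job_name, job_namespace, run_id=None, event_time=None):
    """Build a COMPLETE run event with the same fields as the lab command.

    event_time should be a timezone-aware datetime; it defaults to now (UTC).
    run_id defaults to a random UUID (an assumption; the lab uses "4321").
    """
    when = (event_time or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return {
        # Millisecond precision with a trailing Z, as in the lab payload.
        "eventTime": when.strftime("%Y-%m-%dT%H:%M:%S.%f")[:-3] + "Z",
        "eventType": "COMPLETE",
        "inputs": list(inputs),
        "job": {"name": job_name, "namespace": job_namespace},
        "outputs": list(outputs),
        "producer": "https://github.com/OpenLineage/OpenLineage/blob/v1-0-0/client",
        "run": {"runId": run_id or str(uuid.uuid4())},
        "schemaURL": "https://openlineage.io/spec/1-0-5/OpenLineage.json#/$defs/RunEvent",
    }


# "my-project" is a placeholder; use your lab project ID.
event = run_event(
    inputs=[bigquery_dataset("my-project", "qwiklabs_bank", "Customer_Data_Raw")],
    outputs=[bigquery_dataset("my-project", "qwiklabs_bank", "Customer_Data_Parsed")],
    job_name="somename",
    job_namespace="somenamespace",
    run_id="4321",
    event_time=datetime(2023, 4, 4, 13, 21, 16, 98000, tzinfo=timezone.utc),
)
print(json.dumps(event, indent=2))
```

To capture a different transformation, such as the one in the optional practice below, you would change only the input and output table names and pass the resulting JSON to the same processOpenLineageRunEvent request.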


Want more practice with entering a one-off OpenLineage event using the Data Lineage API?

Repeat the steps in Task 5 to review qwiklabs_bank_2.py, and then use the Data Lineage API to capture that Customer_Data_Parsed was used to create Customer_Data_Validated.

Congratulations!

In this lab, you executed a Serverless for Apache Spark job in Managed Service for Apache Spark with lineage captured and visually reviewed data lineage graphs in BigQuery. Then, you used the Data Lineage API to explore the downstream implications of updating tables and to add an OpenLineage event to capture data transformations from non-integrated services such as Apache Spark jobs executed outside of the Google Cloud project.

Next steps / Learn more

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated May 04, 2026

Lab Last Tested May 04, 2026

Copyright 2026 Google LLC. All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.
