Overview

Query Iceberg tables in Cloud Storage with BigQuery In this lab, you'll explore how BigQuery's Lakehouse tables can query data in open-source formats directly within Cloud Storage. You'll learn how to create a Lakehouse table that points to an existing Iceberg table, enabling you to analyze data without moving or duplicating it. This powerful feature allows you to maintain a single source of truth in an open format while leveraging BigQuery's high-performance analytics engine. This hands-on experience is a cornerstone of a modern data lakehouse, where you can unify your data regardless of its format or location.

You'll start by creating a connection that allows BigQuery to securely access data in Cloud Storage. Then, you'll define a Lakehouse table over a sample Iceberg table that contains raw web log data. Iceberg is an open table format designed for huge analytic datasets, and it's often used with processing engines like Apache Spark.

Finally you will run a query that seamlessly joins the Iceberg data in Cloud Storage with a native BigQuery table containing customer information. By mastering these technique, Cymbal E-commerce can answer critical business questions that span different data sources. For example: "Which products are most frequently viewed by our highest-value customers right before they make a purchase?"

What you'll do

- Create an Iceberg table from a CSV file in Cloud Storage using BigQuery and Lakehouse.

- Query the Iceberg table to analyze data stored in Cloud Storage.

- Join the Lakehouse table with a native BigQuery table to combine datasets.

- Create a Cloud resource connection to Cloud Storage.

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

What you need

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab.

Note: If you have a personal Google Cloud account or project, do not use it for this lab.

Note: If you are using a Pixelbook, open an Incognito window to run this lab.

Log in to Google Cloud Console

- Using the browser tab or window you are using for this lab session, copy the Username from the Connection Details panel and click the Open Google Console button.

Note: If you are asked to choose an account, click Use another account.

- Paste in the Username, and then the Password as prompted.

- Click Next.

- Accept the terms and conditions.

Since this is a temporary account, which will last only as long as this lab:

- Do not add recovery options

- Do not sign up for free trials

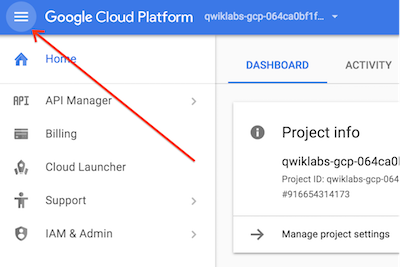

- Once the console opens, view the list of services by clicking the Navigation menu (

) at the top-left.

) at the top-left.

Verify or enable required APIs

-

In the Google Cloud console, enter BigLake API in the top search bar.

-

Click on the result for BigLake API under Marketplace.

-

If the API is not already enabled, click Enable to enable the API.

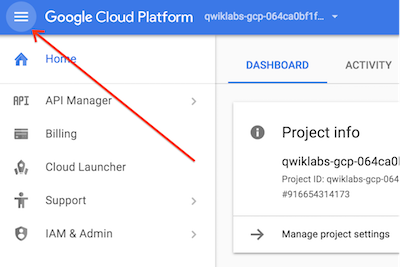

Check IAM permissions.

-

From the navigation menu select IAM & Admin and from the flyout submenu select IAM.

-

Find your entry which will be similar to student-xx-xxxxxxxxxxxx@qwiklabs.net

-

Verify you have the role BigQuery Connection Admin and BigQuery Connection User

Task 1. Create a connection to Cloud Storage

In this task, you create a new connection to Cloud Storage for BigQuery to use for reading the data in a CSV file in Cloud Storage as an external table.

Create the connection

-

In the Google Cloud console, in the Navigation menu ( ), navigate to BigQuery > Studio.

), navigate to BigQuery > Studio.

-

On the top left pane, click Explorer and click + Add data.

-

For Data Source Type select Storage/Data Lakes.

-

For Most popular data sources click the card for Google Cloud Storage.

-

In the resulting selection of cards for Access external data in place click on External or Lakehouse Table.

-

In the Create table screen use the following values:

| Property |

Value |

| Create table from |

Google Cloud Storage |

| Select file from GCS bucket or use a URI pattern |

cloud-training/OCBL462/cymbal_synthetic_weblog_data.csv |

| File format |

CSV |

| Source Data Partitioning |

Leave un-checked |

| Project |

{{{project_0.project_id | Project ID}}} |

| Dataset |

Click Dataset, from Loaded datasets select

cymbal_lake |

| Table |

web_log |

| Table type |

External table |

| Create a Lakehouse table using a Cloud resource connection |

Check the box for this item |

| Click the Connection ID drop down and select Create a new Cloud resource connection

|

| Connection type |

Vertex AI remote models, remote functions and Lakehouse and Spanner (Cloud Resource) |

| Connection ID |

gcs-bucket-{{{project_0.project_id | Project ID}}}_eds |

| Location type |

Region |

| Region |

{{{project_0.default_region | Region}}} |

| Friendly name |

Leave blank |

| Description |

Leave blank |

| Click Create connection

|

|

| Schema, Auto detect |

Check the box for this item |

- Leave everything else at the defaults and click Create table

To verify the objective, click Check my progress.

Create a connection to Cloud Storage

Task 2. Create and load the Iceberg table in Cloud Storage with BigQuery

In this task you ingest the data from the external table you just created and load it into Iceberg format in a Cloud Storage bucket.

-

In the Google Cloud console, in the Navigation menu ( ), navigate to BigQuery > Studio.

), navigate to BigQuery > Studio.

-

If not already open click Classic Explorer and expand your project. It will be similar to qwiklabs-gcp-xx-xxxxxxxxxxxx.

-

Expand the entry for cymbal_lake.

-

Click the 3 vertical dots to the right of the entry for web_log. Select Query from the popup menu.

-

Close the reference panel by clicking the X in the top right corner.

-

Replace the SQL query with the following code:

CREATE TABLE cymbal_lake.iceberg_web_log

WITH CONNECTION `projects/{{{project_0.project_id | Project ID}}}/locations/{{{project_0.default_region | Region}}}/connections/gcs-bucket-{{{project_0.project_id | Project ID}}}_eds`

OPTIONS (

table_format = 'ICEBERG',

storage_uri = 'gs://gcs-bucket-{{{project_0.project_id | Project ID}}}') AS

SELECT * FROM `cymbal_lake.web_log`;

-

Click the Run button. After processing you will get an error message. Don't close it as you need the BigQuery service account ID from it to set the proper permission. The error message will be like this example:

Access Denied: BigQuery BigQuery: Permission denied while writing data. bqcx-542604010272-n24h@gcp-sa-bigquery-condel.iam.gserviceaccount.com does not have storage.objects.create access to the Google Cloud Storage object. Permission 'storage.objects.create' denied on resource (or it may not exist). Please make sure gs://gcs-bucket-qwiklabs-gcp-00-333263c4c0ba/data/46d259a5-5da4-4e66-afa8-ae849d1bef50-17da16d07142b039-f-00000-of-00001.parquet is accessible via appropriate IAM roles, e.g. Storage Object Viewer or Storage Object Creator

-

Copy the user ID from the error message. It will be in this format bqcx-xxxxxxxxxxxx-xxxx@gcp-sa-bigquery-condel.iam.gserviceaccount.com

-

In the Google Cloud console, in the Navigation menu ( ), navigate to Cloud Storage > Buckets.

), navigate to Cloud Storage > Buckets.

-

Click the 3 vertical dots at the right end of the row with your bucket gcs-bucket- and select Edit access.

-

In the box Access control click the link to Switch to uniform

-

In the popup select Uniform. Click Save.

-

Click + Add principal

-

In New principal paste in the user ID you copied from the BigQuery error message.

-

Click Select a role *

-

In the Filter enter Storage Object User and select Storage Object User from the resulting list, click Save and then click Update.

-

Return to BigQuery and rerun the create table query by repeating Task 2 step 6.

To verify the objective, click Check my progress.

Create and load the Iceberg table in Cloud Storage with BigQuery

Task 3. Query the Iceberg table from BigQuery

You will now work with BigQuery to access the data in the Iceberg table you just created in Cloud Storage.

-

In the Google Cloud console, in the Navigation menu ( ), navigate to BigQuery > Studio.

), navigate to BigQuery > Studio.

-

If not already open expand the entry in Classic Explorer for your project. It will appear like qwiklabs-gcp-xx-xxxxxxxxxxxx.

-

Expand the entry for cymbal_lake.

-

Click the 3 vertical dots to the right of the entry for iceberg_web_log. Select Query from the popup menu.

-

Close the reference panel by clicking the X in the top right corner.

-

Replace the SQL query with the following code:

SELECT * FROM cymbal_lake.iceberg_web_log LIMIT 1000;

-

Click the Run button. The returned data is from your Iceberg table in Cloud Storage!

-

Replace the SQL query with the following code:

SELECT * FROM cymbal_lake.iceberg_web_log AS WL

INNER JOIN customers.customer_details AS CD

ON WL.customer_id = CD.id

LIMIT 1000;

-

Click the Run button. The returned data is from your Iceberg table in Cloud Storage joined to customer data in a native BigQuery table!

To verify the objective, click Check my progress.

Query the Iceberg table from BigQuery

Congratulations!

You configured an external table in BigQuery to access data from a CSV file in Cloud Storage. You then created an Iceberg table in Cloud Storage and populated it with data from the CSV file by using BigQuery. Finally, you ran a query combining data in the Iceberg table with data in a native BigQuery table. While this was a simple example, it demonstrates the power and flexibility that Cymbal's BigQuery and Lakehouse provides to turn data into a powerful business tool.

) at the top-left.

) at the top-left.