GSP1341

Note: Click Start Lab before continuing past this point, as the startup script for this lab takes about 20 minutes to complete. In the meantime, you can review the lab instructions to get an overall sense of the workflow.

Overview

Ready to begin the journey to achieve zero-downtime database modernization and migration across different database options? You can now conquer heterogeneous migration with Database Migration Service!

In this lab, you learn how to bypass legacy toolchains and dive straight into the efficient, automated workflow of Database Migration Service. Specifically, you execute a heterogeneous migration from SQL Server (proprietary T-SQL) to open-source PostgreSQL, using Gemini, Google's integrated AI, to speed up conversion. You learn how to use the Database Migration Service Conversion Workspace, which is critical for overcoming heterogeneous compatibility issues, explaining conversions, and enabling user-guided fixes; it can turn weeks of manual effort into hours of targeted review. Last, you run a continuous migration job that minimizes downtime by performing the full data load and then maintaining real-time synchronization until the final, safe cutover.

For this lab environment, the following infrastructure has been created using Terraform: a Cloud SQL for SQL Server source, an AlloyDB for PostgreSQL destination, and secure Private Connectivity (VPC Peering/Private Service Connect, PSC) between them. This allows you to focus entirely on the critical migration data flow, conversion, and validation steps.

If you have not already, click Start Lab to initiate the Terraform process (completes in 20 minutes), and in the meantime, you can review the lab instructions to get an overall sense of the workflow.

What you'll do

In this lab, you migrate a database schema and data from Cloud SQL for SQL Server to an AlloyDB for PostgreSQL cluster using the Database Migration Service Conversion Workspace (including code remediation) and a continuous migration job.

- Use AlloyDB Studio to configure the AlloyDB for PostgreSQL destination database for Database Migration Service migration jobs.

- Use Cloud SQL Studio to verify the source database schema in Cloud SQL for SQL Server and configure it to support Change Data Capture (CDC) for the continuous migration job.

- Create connection profiles for the source and destination instances for use by Database Migration Service migration jobs.

- Complete AI-assisted schema conversion via the Conversion Workspace in Database Migration Service.

- Run a continuous migration job for heterogeneous migration using Database Migration Service.

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources are made available to you.

This hands-on lab lets you do the lab activities in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

Note: Use an Incognito (recommended) or private browser window to run this lab. This prevents conflicts between your personal account and the student account, which could otherwise cause extra charges to be incurred on your personal account.

- Time to complete the lab—remember, once you start, you cannot pause a lab.

Note: Use only the student account for this lab. If you use a different Google Cloud account, you may incur charges to that account.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a dialog opens for you to select your payment method.

On the left is the Lab Details pane with the following:

  - The Open Google Cloud console button

  - Time remaining

  - The temporary credentials that you must use for this lab

  - Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}}

You can also find the Username in the Lab Details pane.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}}

You can also find the Password in the Lab Details pane.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

  - Accept the terms and conditions.

  - Do not add recovery options or two-factor authentication (because this is a temporary account).

  - Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Note: To access Google Cloud products and services, click the Navigation menu or type the service or product name in the Search field.

Task 1. Configure the destination instance (AlloyDB for PostgreSQL)

Before Database Migration Service can initiate the migration process, the destination AlloyDB cluster needs a dedicated database and a user with the necessary privileges. In this lab, the user named dms has already been created for you.

In this task, you use AlloyDB Studio (the query editor) in the Google Cloud console to sign in as dms and create a new database, also named dms.

Connect to the AlloyDB instance using AlloyDB Studio

- In the Google Cloud Console, on the Navigation menu, click View all products. Under Databases, click AlloyDB for PostgreSQL.

- In the AlloyDB menu, review the Clusters page to see the list of clusters including a cluster named alloydb-cluster-skillsdms.

It may take a few minutes for both the cluster and instance to be fully provisioned.

When you see a Status of Ready (green checkmark) for both the cluster named alloydb-cluster-skillsdms and the instance named alloydb-instance-skillsdms, you can proceed to the next step.

- Click on the instance named alloydb-instance-skillsdms.

- In the AlloyDB menu under Primary cluster, click AlloyDB Studio.

- Provide the following details to sign in, and click Authenticate.

| Property | Value |
| --- | --- |
| Database | Select postgres |
| Authentication method | Select Built-in database authentication |
| User | Select dms |
| Password | Welcome123# |

Create the Database Migration Service database

Now that you are connected to the AlloyDB instance via AlloyDB Studio, you can execute the following SQL to create a target database named dms for the low-privilege migration user, also named dms. Using a dedicated user (dms) for the migration enhances security and isolates the migration process from other database operations.

- On the AlloyDB Studio page, click the Untitled Query tab to access the query window.

- To create the destination database named dms and automatically assign ownership to the user named dms (a new PostgreSQL database is owned by the user who creates it), copy and paste the following query in the query window, and click Run.

CREATE DATABASE dms;

When the query has executed successfully, you see a message that says Statement executed successfully.

Click Check my progress to verify the objective.

Configure the destination instance (AlloyDB for PostgreSQL)

Task 2. Configure the source instance (Cloud SQL for SQL Server)

In this task, you first confirm the presence of the schema and data that Database Migration Service will read and migrate. This step ensures the source is accessible and contains the objects expected for migration (e.g., tables like customers, stored procedures). Then, you configure the Cloud SQL for SQL Server instance to support Change Data Capture (CDC) for continuous migration.

Verify the source database schema in Cloud SQL for SQL Server

In this section, you review some data in the Cloud SQL for SQL Server instance to get an overview of the data that is being migrated. In a later task for the migration job, you run similar queries to verify the row count for these same tables in the destination instance.

- In the Google Cloud Console, on the Navigation menu, click Cloud SQL.

- Click on the instance ID called mssql-instance-skillsdms.

- In the Cloud SQL menu under Primary instance, click Cloud SQL Studio.

- Provide the following details to sign in, and click Authenticate.

| Property | Value |
| --- | --- |
| Database | Select dms |
| User | Select sqlserver |
| Password | Welcome123# |

- On the Cloud SQL Studio page, click the Untitled Query tab to access the query window.

- To run a simple query to confirm data presence, copy and paste the following query in the query window, and click Run.

SELECT COUNT(*) FROM "dbo"."Categories";

The query returns a count of 8.

- Repeat the previous step with a second query of the data:

SELECT COUNT(*) FROM "dbo"."Customers";

The query returns a count of 91.

Configure the Cloud SQL for SQL Server instance to support Change Data Capture (CDC)

In this section, you configure the Cloud SQL for SQL Server instance to support Change Data Capture (CDC), which is a process to continuously read updated data from a source data instance and write these changes to a destination data instance in real time. CDC begins with a full data dump from the source to the destination, similar to a one-time migration. With CDC enabled, any additional updates in the source (after the initial data dump) are sent to the destination immediately (in this example, AlloyDB for PostgreSQL).

Note: For one-time migration jobs, you do not need to configure the source instance (in this example, Cloud SQL for SQL Server) to support Change Data Capture (CDC). A one-time migration job completes a full data dump with no additional changes sent from the source to the destination instance.
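The difference between a full data dump and CDC can be illustrated with a toy in-memory model. This is a minimal sketch under stated assumptions, not how Database Migration Service or SQL Server CDC is implemented: the `ChangeEvent` type, its operation names, and the dictionary-based tables are hypothetical stand-ins for the source and destination instances.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ChangeEvent:
    """Hypothetical row-level change captured on the source."""
    op: str                      # "insert", "update", or "delete"
    key: str                     # primary-key value of the affected row
    row: Optional[dict] = None   # new row image (None for deletes)


def full_dump(source: Dict[str, dict]) -> Dict[str, dict]:
    """Copy every source row once, like the initial full-dump phase."""
    return {key: dict(row) for key, row in source.items()}


def apply_changes(dest: Dict[str, dict], events: List[ChangeEvent]) -> None:
    """Replay captured changes in commit order, like the CDC phase."""
    for event in events:
        if event.op == "delete":
            dest.pop(event.key, None)
        else:  # inserts and updates both write the latest row image
            dest[event.key] = dict(event.row)


# Toy source table before the migration job starts.
source = {"CUST1": {"CompanyName": "Example Company"}}
dest = full_dump(source)                 # a one-time job stops here

# A row added on the source after the dump is captured and replayed.
new_row = {"CompanyName": "Test CompanyName"}
source["TEST1"] = new_row
apply_changes(dest, [ChangeEvent("insert", "TEST1", new_row)])
print(len(dest))  # 2
```

In this model, a one-time job corresponds to calling only `full_dump`, while a continuous job keeps replaying changes with `apply_changes` until cutover, which is why only continuous jobs need CDC enabled on the source.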

- In the Cloud SQL Studio query window, click Clear to remove the previous query text.

- To enable CDC on the dms database and then on each of its tables in the dbo schema, copy and paste the following query in the query window, and click Run.

EXEC msdb.dbo.gcloudsql_cdc_enable_db 'dms';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Employees', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Categories', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Customers', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Shippers', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Suppliers', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Orders', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Products', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Order Details', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'CustomerCustomerDemo', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'CustomerDemographics', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Region', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'Territories', @role_name = N'CDC';

EXEC sys.sp_cdc_enable_table @source_schema = N'dbo', @source_name = N'EmployeeTerritories', @role_name = N'CDC';
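The table-level statements above all follow one pattern, so they could also be generated from a list of table names. The Python sketch below is an illustration only (the helper names `sql_literal` and `cdc_enable_statements` are hypothetical). It also shows a detail worth noting: a name such as Order Details, which contains a space, needs no brackets here because it is passed as an N'...' string parameter, and any single quote inside a name would be doubled.

```python
from typing import List


def sql_literal(value: str, unicode: bool = True) -> str:
    """Render a T-SQL string literal, doubling any embedded single quotes."""
    escaped = value.replace("'", "''")
    return ("N" if unicode else "") + "'" + escaped + "'"


def cdc_enable_statements(database: str, schema: str, tables: List[str],
                          role: str = "CDC") -> List[str]:
    """Build the database-level call followed by one call per table."""
    statements = [
        f"EXEC msdb.dbo.gcloudsql_cdc_enable_db {sql_literal(database, unicode=False)};"
    ]
    for table in tables:
        statements.append(
            "EXEC sys.sp_cdc_enable_table "
            f"@source_schema = {sql_literal(schema)}, "
            f"@source_name = {sql_literal(table)}, "
            f"@role_name = {sql_literal(role)};"
        )
    return statements


stmts = cdc_enable_statements("dms", "dbo", ["Categories", "Order Details"])
for stmt in stmts:
    print(stmt)
```

To confirm the result on the source afterward, SQL Server's sys.databases catalog view has an is_cdc_enabled column, and sys.tables has an is_tracked_by_cdc column.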

Identify the internal IP address for the Cloud SQL for SQL Server instance

In this section, you identify the internal IP for the Cloud SQL for SQL Server instance, which you need to create the source connection profile used by the migration job.

- Hover over the Cloud SQL icon on the top left to see the Cloud SQL menu options.

- In the Cloud SQL menu under Primary instance, click Connections.

- In the Summary tab, copy the Internal IP address (for example, 10.134.0.3) for use in the next task.
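The source connection profile uses this internal address over private connectivity, while the destination profile uses the AlloyDB public IP. As a quick sanity check, Python's standard ipaddress module can tell the two kinds of address apart. The addresses below are the example values quoted in this lab, and the helper name `address_kind` is hypothetical.

```python
import ipaddress


def address_kind(address: str) -> str:
    """Classify an IP address as internal (private range) or public."""
    ip = ipaddress.ip_address(address)
    # is_private is True for RFC 1918 ranges such as 10.0.0.0/8.
    return "internal" if ip.is_private else "public"


# Example addresses quoted in this lab.
for addr in ["10.134.0.3", "10.133.1.2", "34.21.12.174", "136.111.93.38"]:
    print(addr, address_kind(addr))
```

In this lab, the source hostname should classify as internal, and the destination hostname should classify as public, matching the Public IP connectivity method chosen for the destination profile.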

Task 3. Configure connection profiles for the source and destination instances

Connection profiles are persistent entities in Database Migration Service that securely store connectivity information. They can be reused across Conversion Workspaces and migration jobs.

In this task, you configure connection profiles for the source (Cloud SQL for SQL Server) and destination (AlloyDB for PostgreSQL) instances. You create the destination connection profile first to allow time for the private connectivity configuration (dms-pc) to finish provisioning before you select it in the source connection profile.

Create the destination connection profile (AlloyDB for PostgreSQL)

- In the Google Cloud Console, on the Navigation menu, click View all products. Under Databases, click Database Migration.

- In the Database Migration menu (left), click Connection profiles.

- Click Create profile.

- For Source engine, under the section for SQL Server, select Cloud SQL for SQL Server.

- For Destination engine, select AlloyDB for PostgreSQL.

- For Choose the profile type to create, select Destination.

- Enter the required information for connection profile name and ID:

| Property | Value |
| --- | --- |
| Connection profile name | alloydb-destination-profile |
| Connection profile ID | Keep the auto-generated value |
| Region | |

- Under Connection details, enter the required information:

| Property | Value |
| --- | --- |
| Cluster ID | In the dropdown menu, select alloydb-cluster-skillsdms. |
| Hostname or IP address | Click in the box to see the available values, and select the Public IP (for example, 34.21.12.174 or 136.111.93.38), not the internal IP (for example, 10.133.1.2). |
| Port | 5432 |
| Username | dms |
| Password | Welcome123# |

- Click Continue.

- For Connectivity method, select Public IP.

- Click Run Test to test the configuration settings.

Note: It can take a few minutes for the test to complete. After the test is completed successfully, you see a message that Your connection profile test was successful.

- After you receive the message "Your connection profile test was successful", click Create.

A new connection profile named alloydb-destination-profile appears in the Connection profiles list.

Create the source connection profile (Cloud SQL for SQL Server)

- On the Connection profiles page, click Create Profile to create a new connection profile.

- For Source engine, under the section for SQL Server, select Cloud SQL for SQL Server.

- For Destination engine, select AlloyDB for PostgreSQL.

- For Choose the profile type to create, select Source.

- Enter the required information for connection profile name and ID:

| Property | Value |
| --- | --- |
| Connection profile name | sqlserver-source-profile |
| Connection profile ID | Keep the auto-generated value |
| Region | |

- Under Define connection configurations, click Edit, and enter the required information:

| Property | Value |
| --- | --- |
| Hostname or IP address | Enter the internal IP for the Cloud SQL for SQL Server source instance that you copied in the previous task (for example, 10.134.0.3). |
| Port | 1433 |
| Username | sqlserver |
| Password | Welcome123# |
| Database | dms |

- For Encryption Type, select None.

- For Connectivity method, select Private connectivity (VPC peering).

- For Private connectivity configuration, select dms-pc.

Note: It can take 5 to 10 minutes for the connection named dms-pc to appear in the dropdown menu for Private connectivity configuration. Until then, you see a message saying that there is no connection created in the current region. Exit and re-enter the Edit window (under Define connection configurations) until dms-pc appears in the dropdown menu.

- Click Save.

- Under Test connection profile, click Run test to test the configuration settings.

Note: It can take a few minutes for the test to complete. After the test is completed successfully, you see a message that Your connection profile test was successful.

- After you receive the message "Your connection profile test was successful", click Create.

A new connection profile named sqlserver-source-profile appears in the Connection profiles list.

Click Check my progress to verify the objective.

Configure connection profiles for the source and destination instances

Task 4. Complete database conversion using Conversion Workspace

The Conversion Workspace is the core tool for heterogeneous migration with Database Migration Service, enabling schema and code translation from proprietary T-SQL syntax to open-source PostgreSQL. The built-in auto-conversion feature leverages Google's AI model named Gemini to automatically apply fixes to common, complex code conversion issues that the deterministic engine struggles with (e.g., specific CURSOR or XML handling), significantly reducing manual effort.

In this task, you create and auto-convert a workspace, review conversion issues, and apply the converted schema to the destination database in AlloyDB for PostgreSQL.

Create the conversion workspace

- In the Database Migration menu (left), select Conversion workspaces.

- Click Set up workspace, and then enter the required information:

| Property | Value |
| --- | --- |
| Conversion workspace name | sqlserver-to-alloydb-workspace |
| Conversion workspace ID | Keep the auto-generated value |
| Region | |
| Source | Select Cloud SQL for SQL Server. |
| Destination | Select AlloyDB for PostgreSQL. |

- Under Enable Gemini settings for your workspace, enable the following two Gemini options:

  - Conversion assistance

  - Pattern matching

You are not enabling auto-conversion at this time, as you will explore that Gemini option later in the lab.

- Click Create workspace & continue.

- When prompted, click Enable for Gemini for Google Cloud API.

- On the Define source and pull schema snapshot tab, for Source connection profile, select sqlserver-source-profile.

- Click Pull schema snapshot & continue.

Note: It can take a few minutes for the pull to complete. After the pull is successfully completed, you see a new page section titled Select and convert objects.

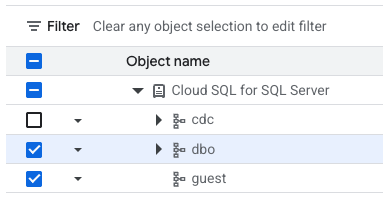

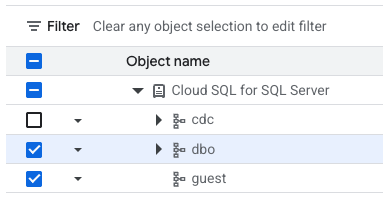

- On the Select and convert objects page, select dbo and guest by clicking the checkbox next to each of those object names.

All object names except cdc are now selected.

- Click Convert & continue.

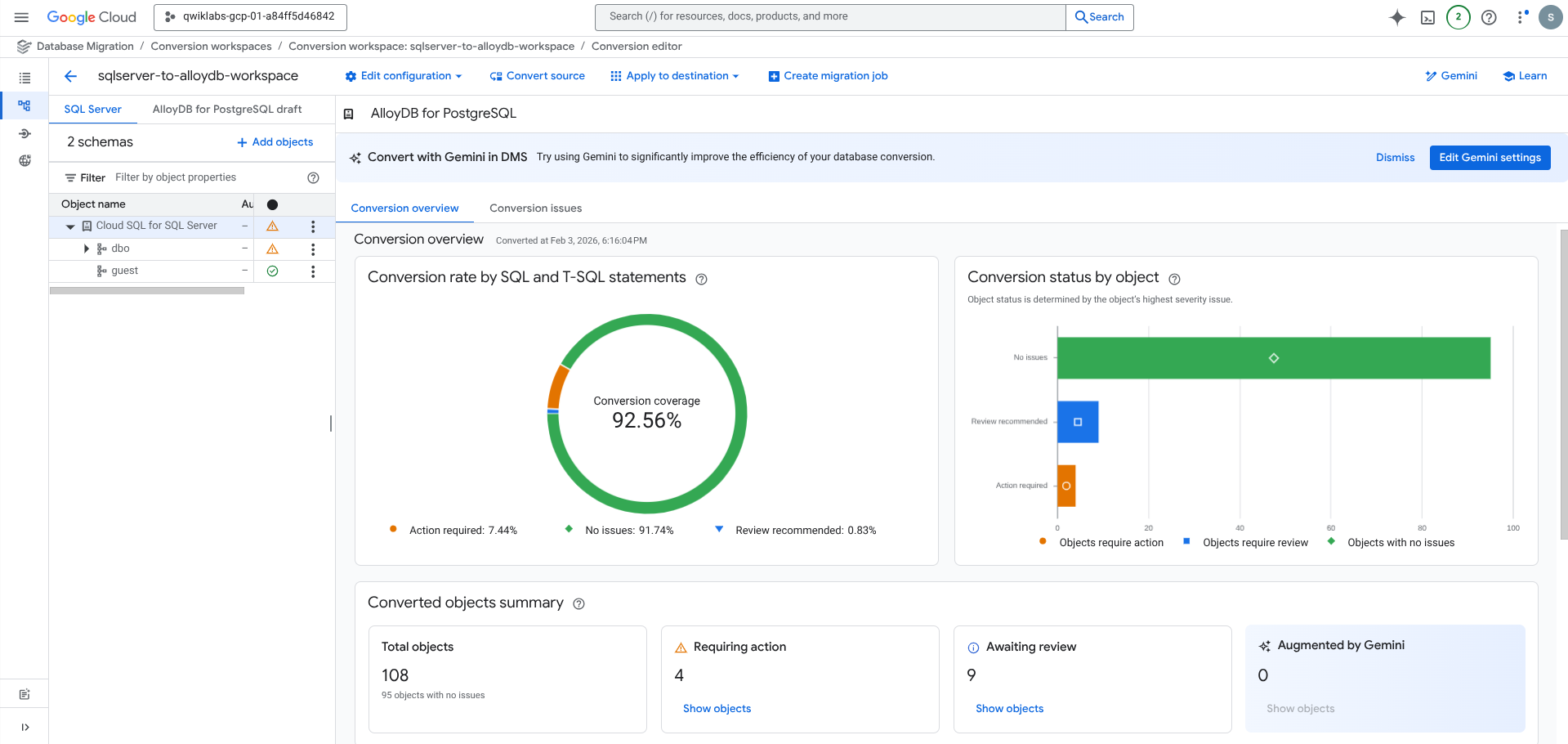

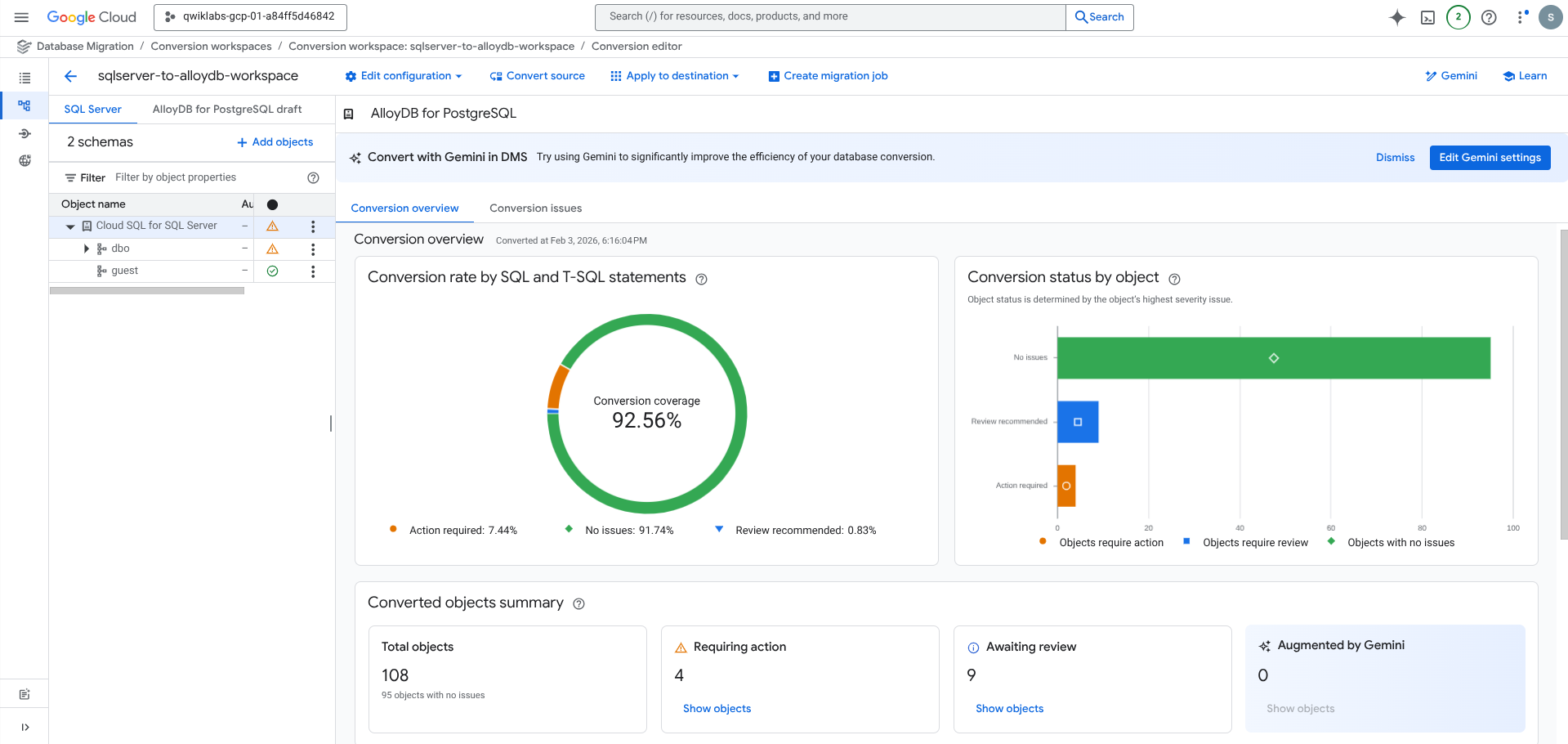

Note: It can take a few minutes for the conversion to run. When the conversion is ready for review, you see a Conversion coverage percentage (for example, 86.78%) under Conversion rate by SQL and T-SQL statements on the Conversion overview page.

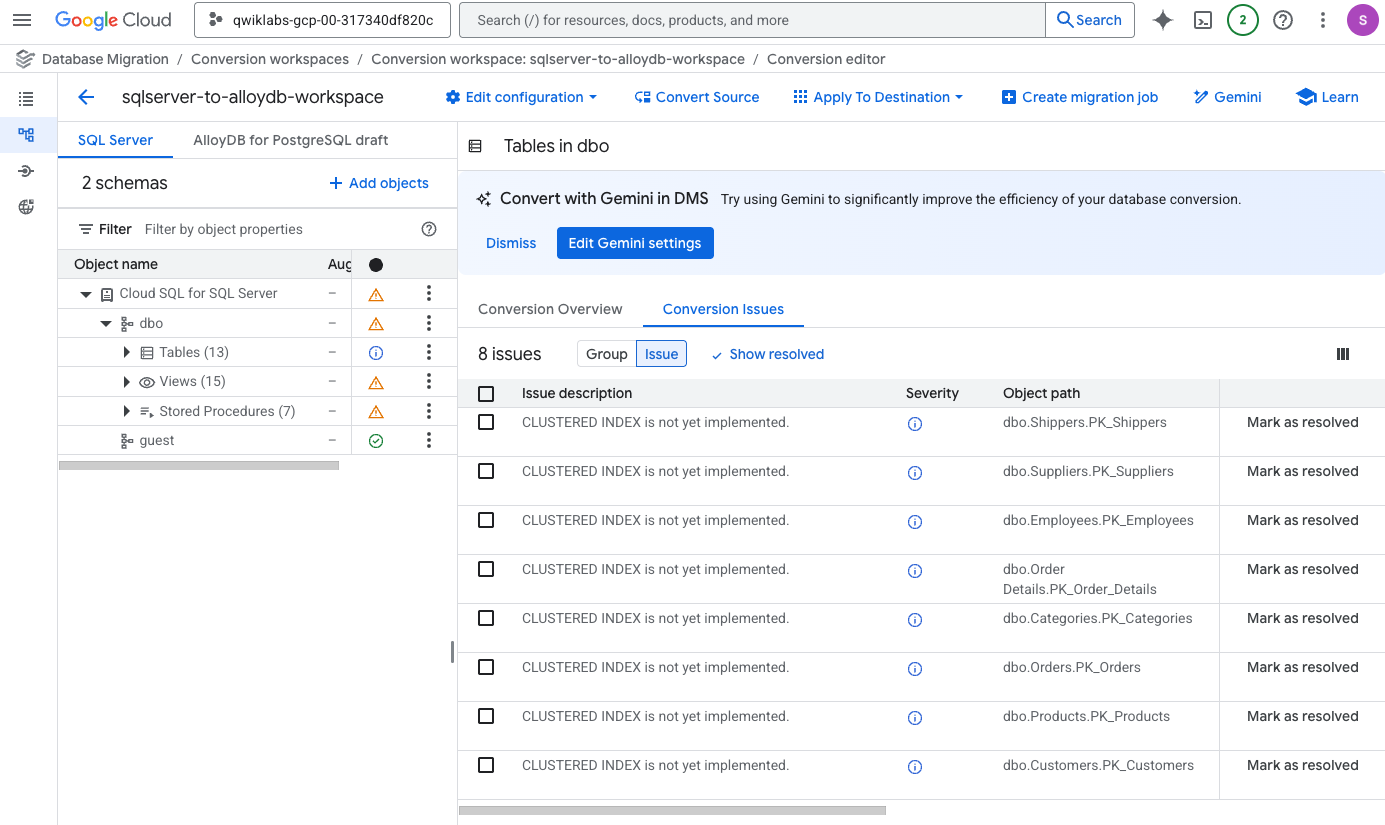

Review and address conversion issues for tables

In this section, you review the conversion issues for tables identified by the tool.

-

In the left menu, under Object name, expand the dropdown arrow next to dbo to see the list of options.

-

Click on Tables.

Notice that the Conversion coverage updates to a new value (for example, 90.7%).

-

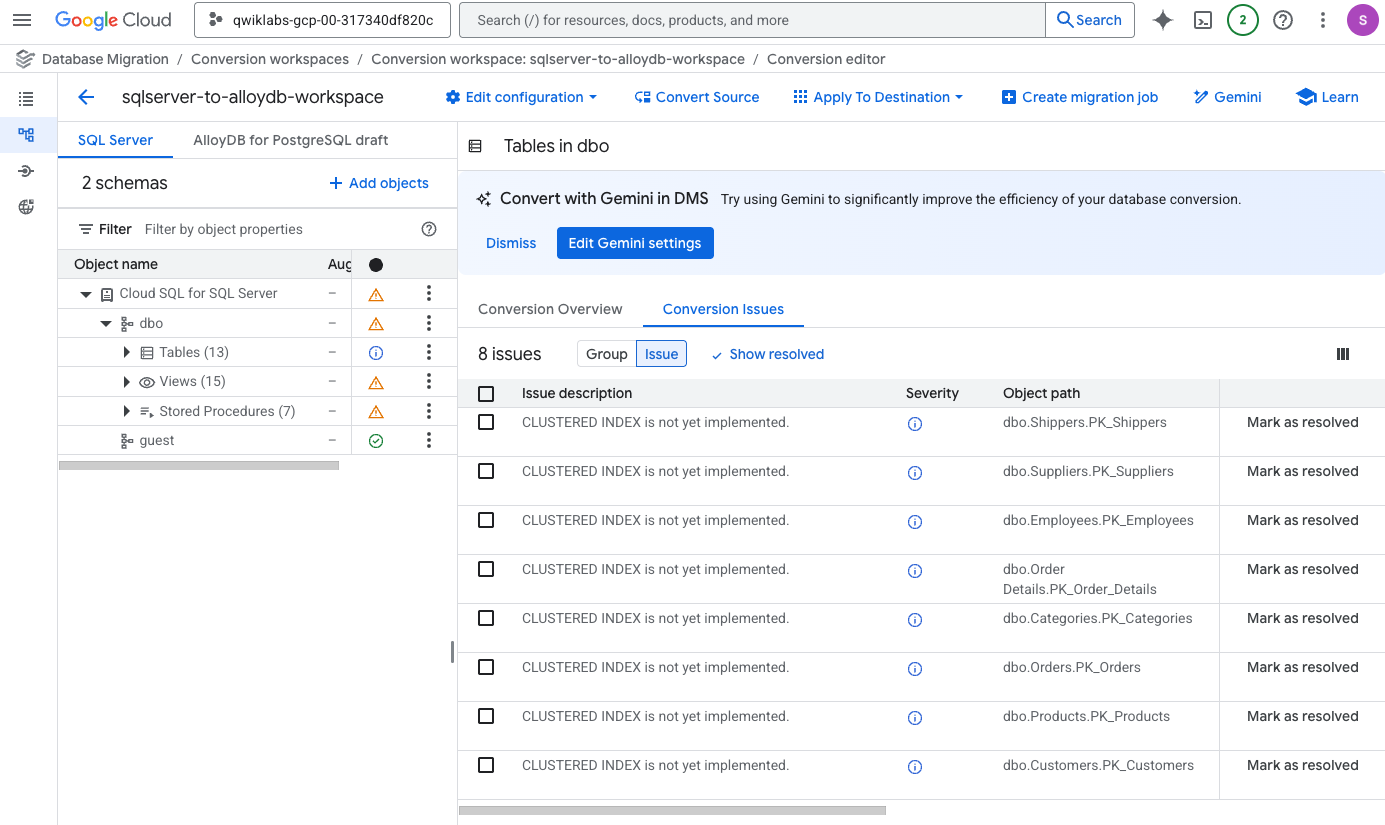

On the main details pane for Tables in dbo, click on the Conversion Issues tab (next to Conversion Overview).

-

In the Conversion Issues table, click on the tab named Issue (next to Group).

The primary recurring issue for tables is CLUSTERED INDEX is not yet implemented. This is due to the fact that the indexing options are different between SQL Server and PostgreSQL; in particular, PostgreSQL does not use clustered indexes.

-

Click on the checkbox next to Issue description to select all eight of the issues, and click Mark as resolved.

-

When prompted, click on Mark all resolved to confirm.

Note: In a production environment, you should review all identified issues carefully. In this lab environment, the identified issues are not blockers for the conversion process, so you can safely proceed with the workflow by marking all issues as resolved.

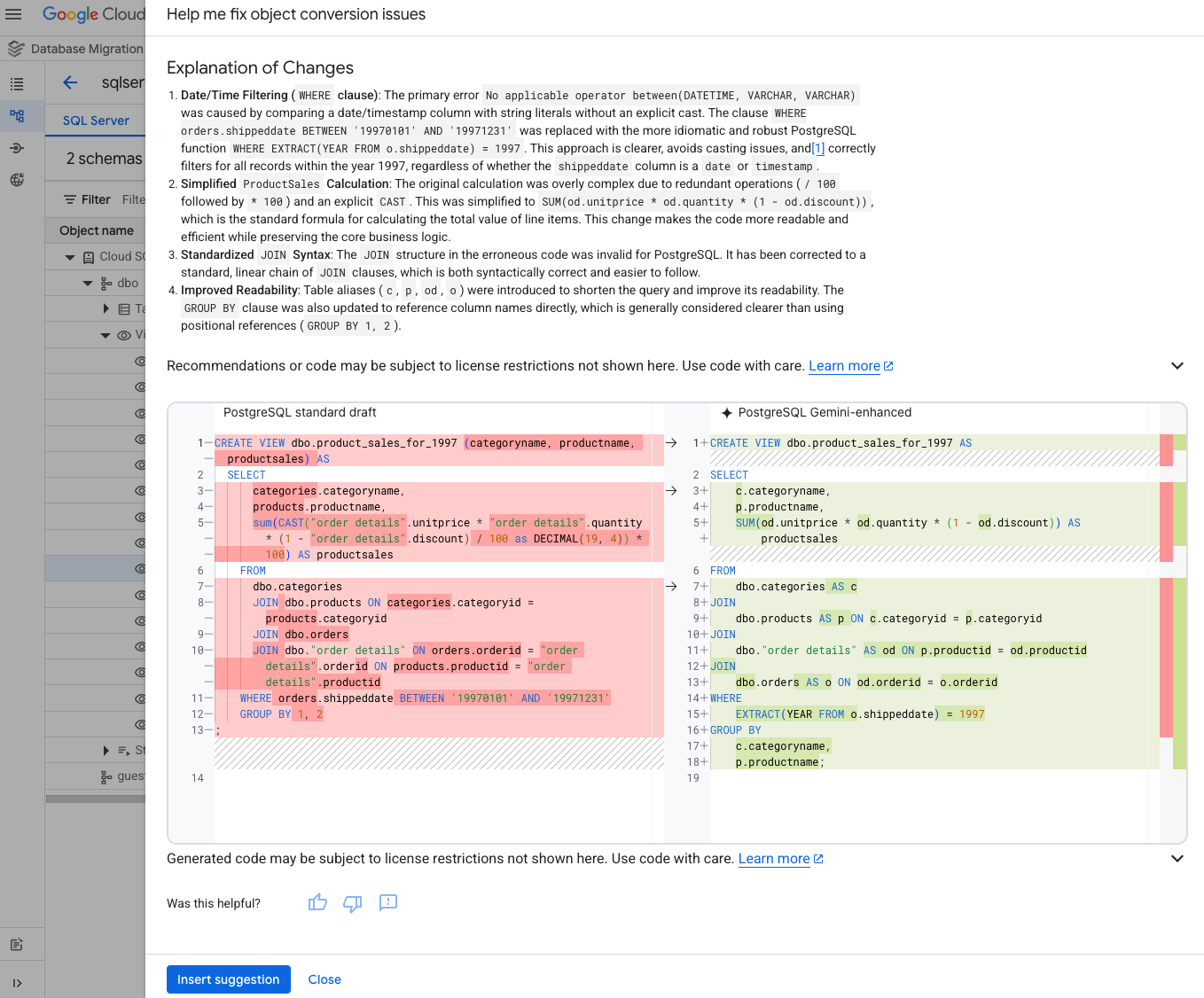
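To make the CLUSTERED INDEX issue concrete: SQL Server DDL can mark a primary key or index as CLUSTERED or NONCLUSTERED, and PostgreSQL accepts neither keyword. The toy Python function below strips those keywords from a DDL string to show the shape of the difference. It is a hypothetical illustration only, not how Database Migration Service converts schemas, and the sample DDL (including the constraint and index names) is made up.

```python
import re

# Matches CLUSTERED or NONCLUSTERED as whole words, plus trailing whitespace.
_CLUSTER_KEYWORD = re.compile(r"\b(?:NON)?CLUSTERED\b\s*", re.IGNORECASE)


def strip_cluster_keywords(ddl: str) -> str:
    """Remove SQL Server clustering keywords that PostgreSQL does not accept."""
    return _CLUSTER_KEYWORD.sub("", ddl)


sample = "CONSTRAINT PK_Categories PRIMARY KEY CLUSTERED (CategoryID)"
print(strip_cluster_keywords(sample))
# CONSTRAINT PK_Categories PRIMARY KEY (CategoryID)
```

Dropping the keyword keeps the key or index itself; what is lost is SQL Server's maintained physical row order, which is one reason such issues are often acceptable to mark as resolved after review.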

Review and address conversion issues for views

In this section, you review the conversion issues for a view identified by the tool and use the interactive editor to fix incompatible code.

-

In the left menu, under Object name, expand the dropdown arrow next to View to see the list of options.

-

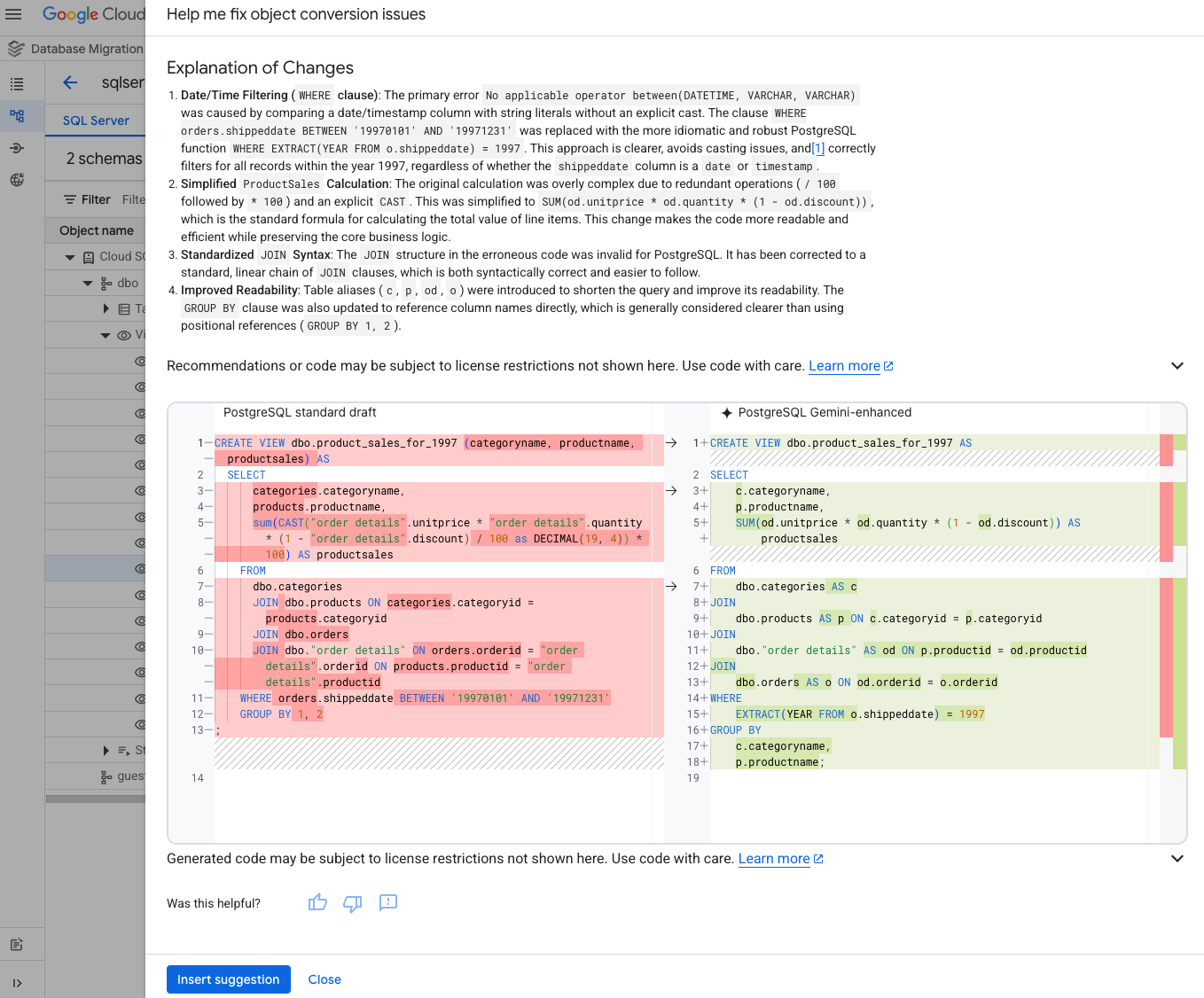

Click on the name for a view that has an issue identified, such as the view named Product_Sales_for_1997.

dbo.Product_Sales_for_1997 is a view in SQL Server that contains the product sales data for the year 1997.

Notice that the original code for SQL Server is provided in the left window, and a starting point for the code in PostgreSQL is provided in the right window. In the next steps, you use Gemini to finalize the code.

- Expand the dropdown arrow next to Assist, and click Help me fix object conversion issues.

Note: If you do not see the option for Help me fix object conversion issues, return to step 2 above, and select a different view name that has an issue identified with the warning status (orange warning triangle icon) to the right of the view name.

Note: After you click Help me fix object conversion issues, it can take a few minutes for the conversion suggestions to generate.

- Review the explanation of the changes, and then click Insert suggestion.

-

Above the code pane for AlloyDB for PostgreSQL draft (right window), click Save your changes represented by the floppy disk icon ( ) to the right of Assist.

) to the right of Assist.

-

When prompted, click Save changes to confirm.

This view has been converted successfully. In an upcoming section, you enable auto-conversion with Gemini, so that the other views can also be converted.

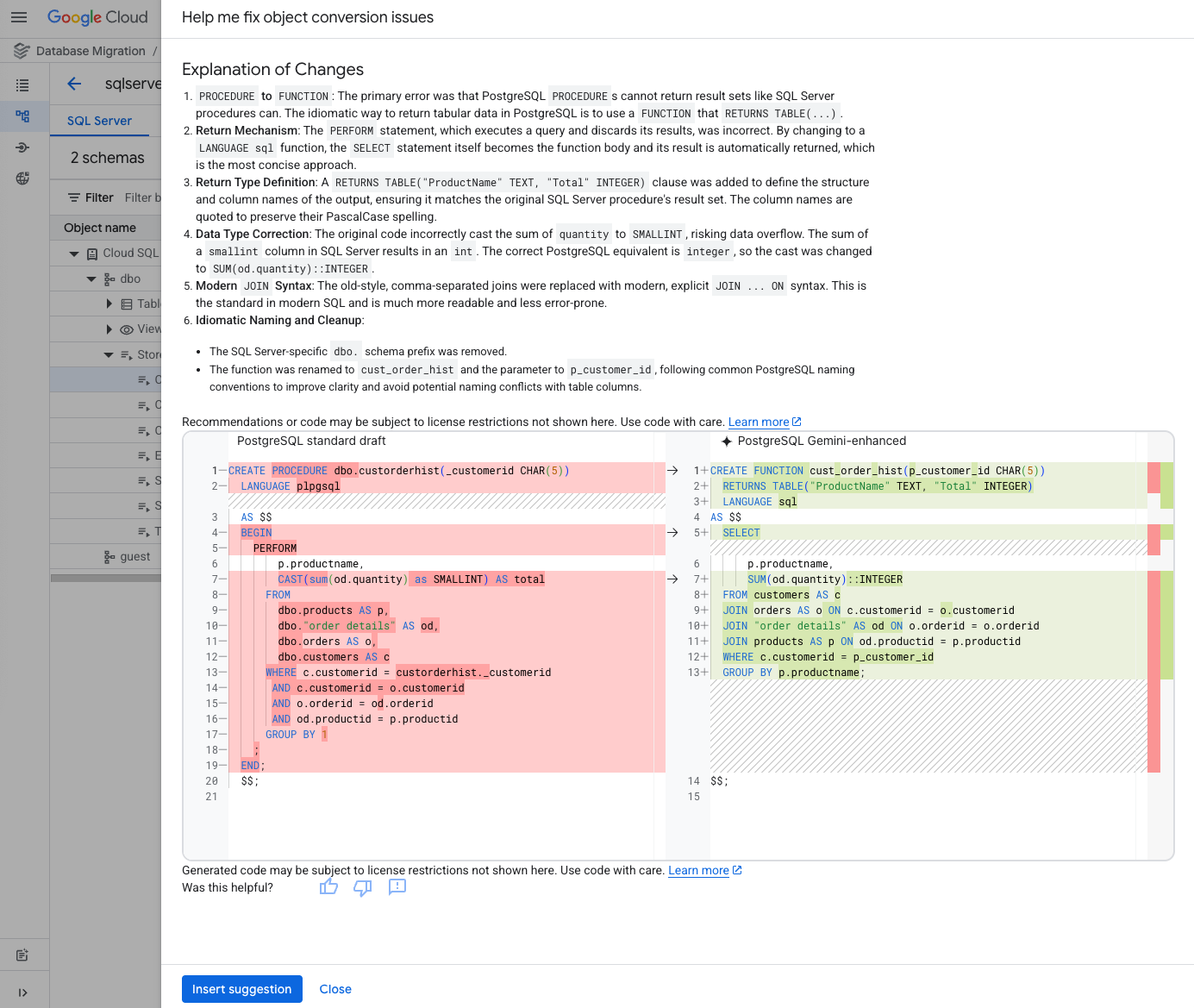

Review and address conversion issues for stored procedures

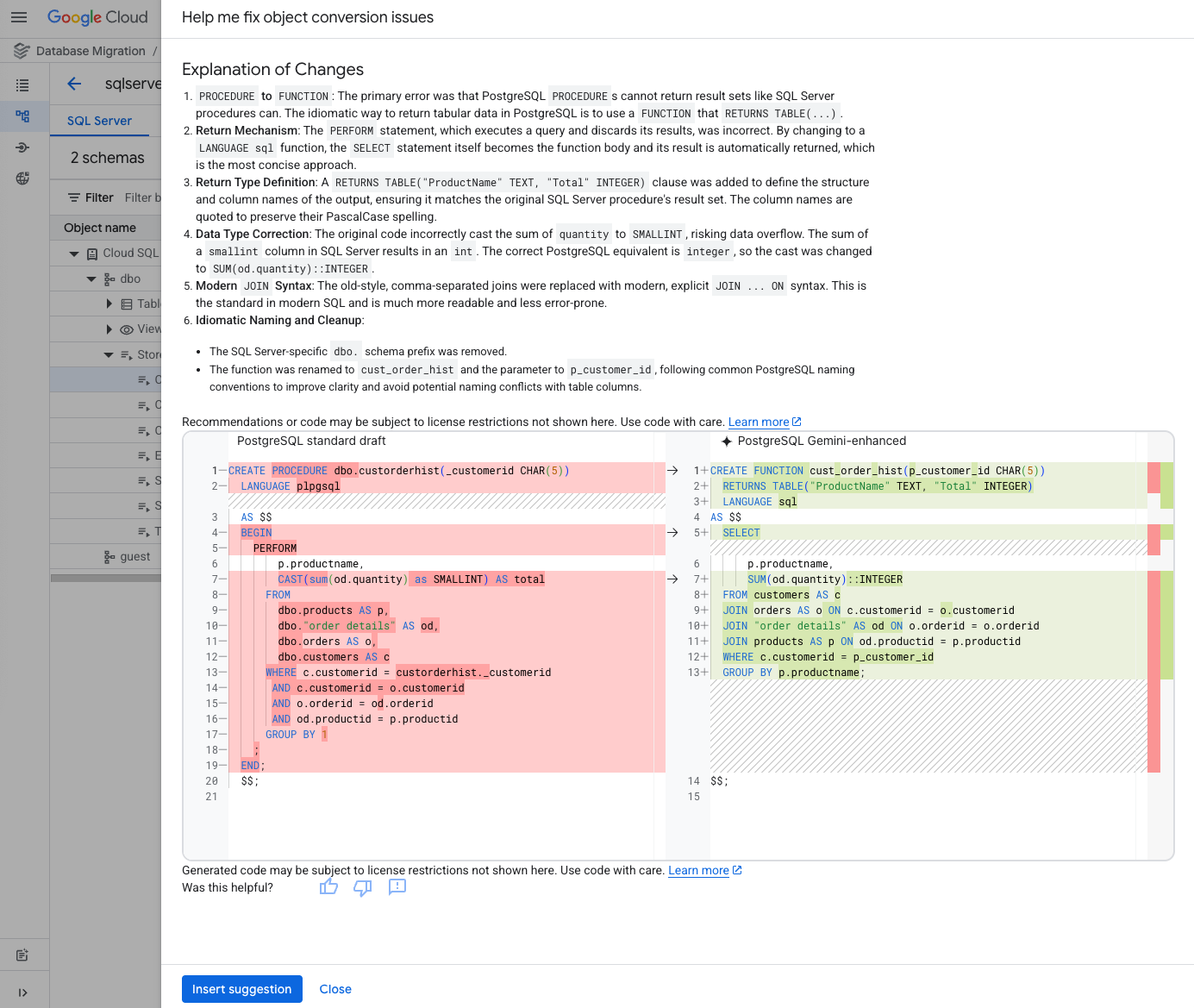

In this section, you review the conversion issues for a stored procedure identified by the tool and use the interactive editor to fix incompatible code.

- In the left menu, under Object name, expand the dropdown arrow next to Stored Procedures to see the list of options.

- Click the name of a stored procedure that has an issue identified, such as the stored procedure named CustOrderHist or Employee_Sales_by_Country.

CustOrderHist is a stored procedure in SQL Server that returns a set of results from the order history.

Again, notice that the original code for SQL Server is provided in the left window, and a starting point for the code in PostgreSQL is provided in the right window. In the next steps, you use Gemini to finalize the code.

- Expand the dropdown arrow next to Assist, and click Help me fix object conversion issues.

Note: If you do not see the option for Help me fix object conversion issues, return to the previous step, and select a different stored procedure that has an issue identified with the warning status (orange warning triangle icon) to the right of the stored procedure name.

Note: After you click Help me fix object conversion issues, it can take a few minutes for the conversion suggestions to generate.

- Review the explanation of the changes, and then click Insert suggestion.

- Above the code pane for AlloyDB for PostgreSQL draft (right window), click Save your changes, represented by the floppy disk icon to the right of Assist.

- When prompted, click Save changes to confirm.

This stored procedure has been converted successfully.
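When reviewing a converted procedure, it can help to pin down what the original returns so that results from the source and destination can be compared. Assuming CustOrderHist follows the classic Northwind definition (for one customer ID, sum Quantity per ProductName across that customer's orders), its logic can be sketched in Python over in-memory rows. The function name, row layout, and sample values are hypothetical; this is a reference for checking results, not the converted PostgreSQL code.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def cust_order_hist(customer_id: str,
                    orders: List[dict],
                    order_details: List[dict],
                    products: Dict[int, str]) -> List[Tuple[str, int]]:
    """Total quantity ordered per product name for one customer."""
    # Orders that belong to the requested customer.
    order_ids = {o["OrderID"] for o in orders if o["CustomerID"] == customer_id}
    totals: Dict[str, int] = defaultdict(int)
    for line in order_details:
        if line["OrderID"] in order_ids:
            totals[products[line["ProductID"]]] += line["Quantity"]
    # Sort by product name so outputs from both databases compare cleanly.
    return sorted(totals.items())


orders = [{"OrderID": 1, "CustomerID": "CUST1"},
          {"OrderID": 2, "CustomerID": "CUST2"}]
order_details = [
    {"OrderID": 1, "ProductID": 10, "Quantity": 3},
    {"OrderID": 1, "ProductID": 11, "Quantity": 2},
    {"OrderID": 2, "ProductID": 10, "Quantity": 5},
]
products = {10: "Product A", 11: "Product B"}
print(cust_order_hist("CUST1", orders, order_details, products))
# [('Product A', 3), ('Product B', 2)]
```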

Enable auto-conversion with Gemini

In the next steps, you enable auto-conversion with Gemini, so that all stored procedures and views from the previous sections can be converted.

-

Above the code pane for AlloyDB for PostgreSQL draft, click Gemini to edit the Gemini settings.

-

Enable the option for Auto-conversion, and click Save settings.

-

When prompted, click Convert to confirm.

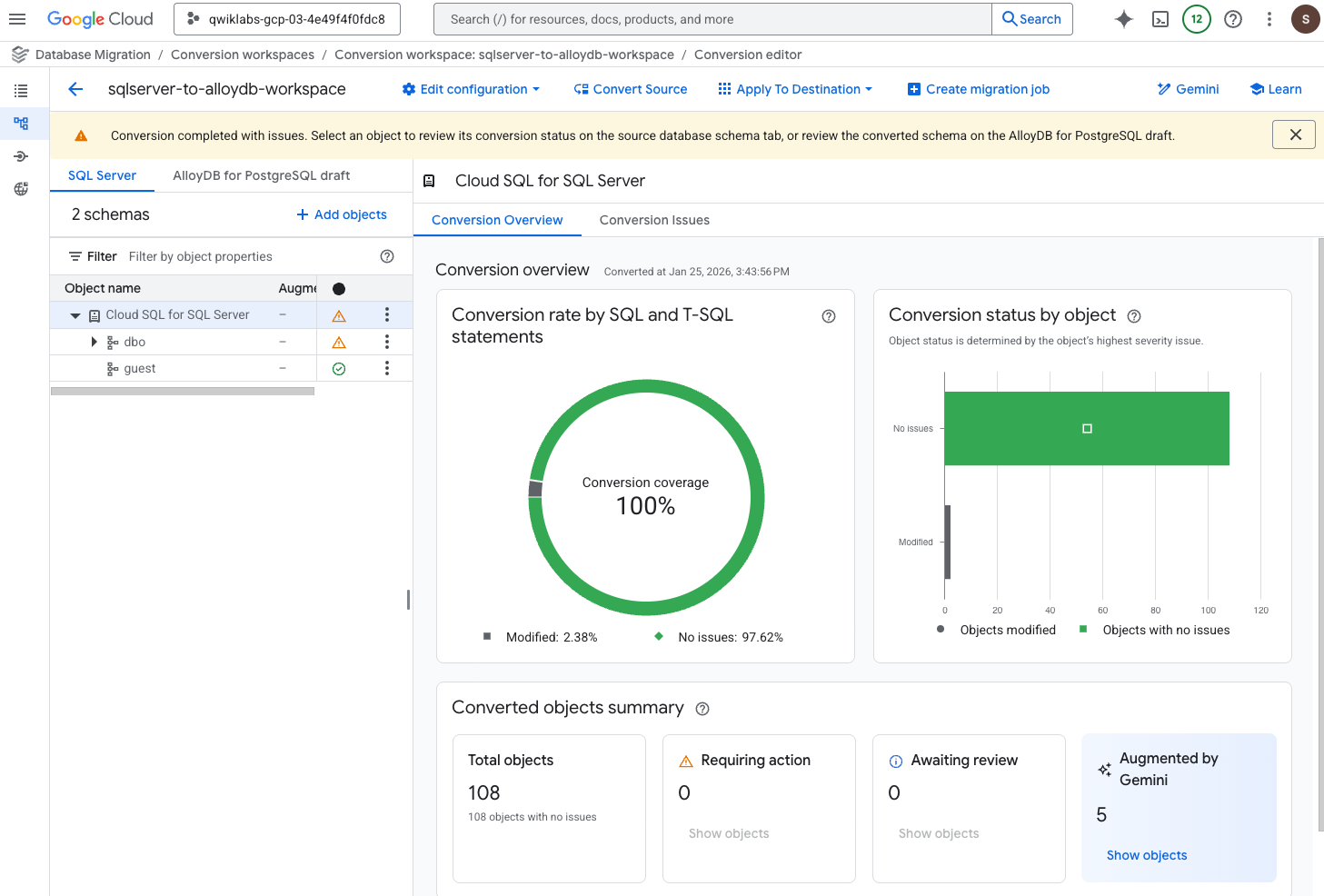

Note: It can take a few minutes for the other views and stored procedures to be updated. If you see a warning that Conversion completed with issues, you can safely ignore this warning and move on to the next steps.

-

Click on the checkbox next to Issue category and group to select all the issue groups, and click Mark all resolved.

-

When prompted, click on Mark all resolved to confirm.

Note: In a production environment, you should review all identified issues carefully. In this lab environment, the identified issues are not blockers for the conversion process, so you can safely proceed with the workflow by marking all issues as resolved.

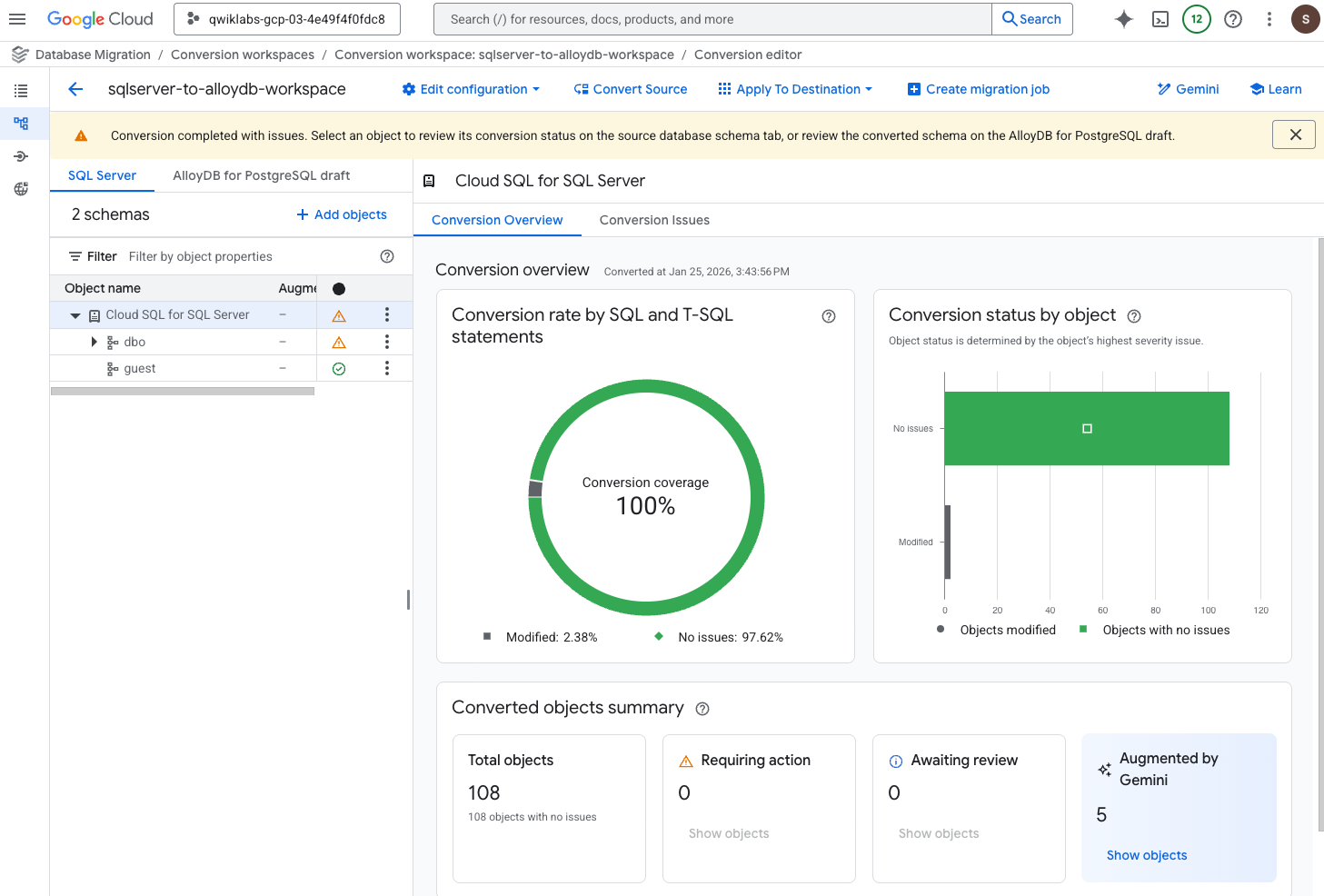

Confirm conversion coverage

- Click on Conversion overview (next to Conversion Issues).

- In the left menu, under Object name, click Cloud SQL for SQL Server.

Notice that the conversion coverage is now 100%.

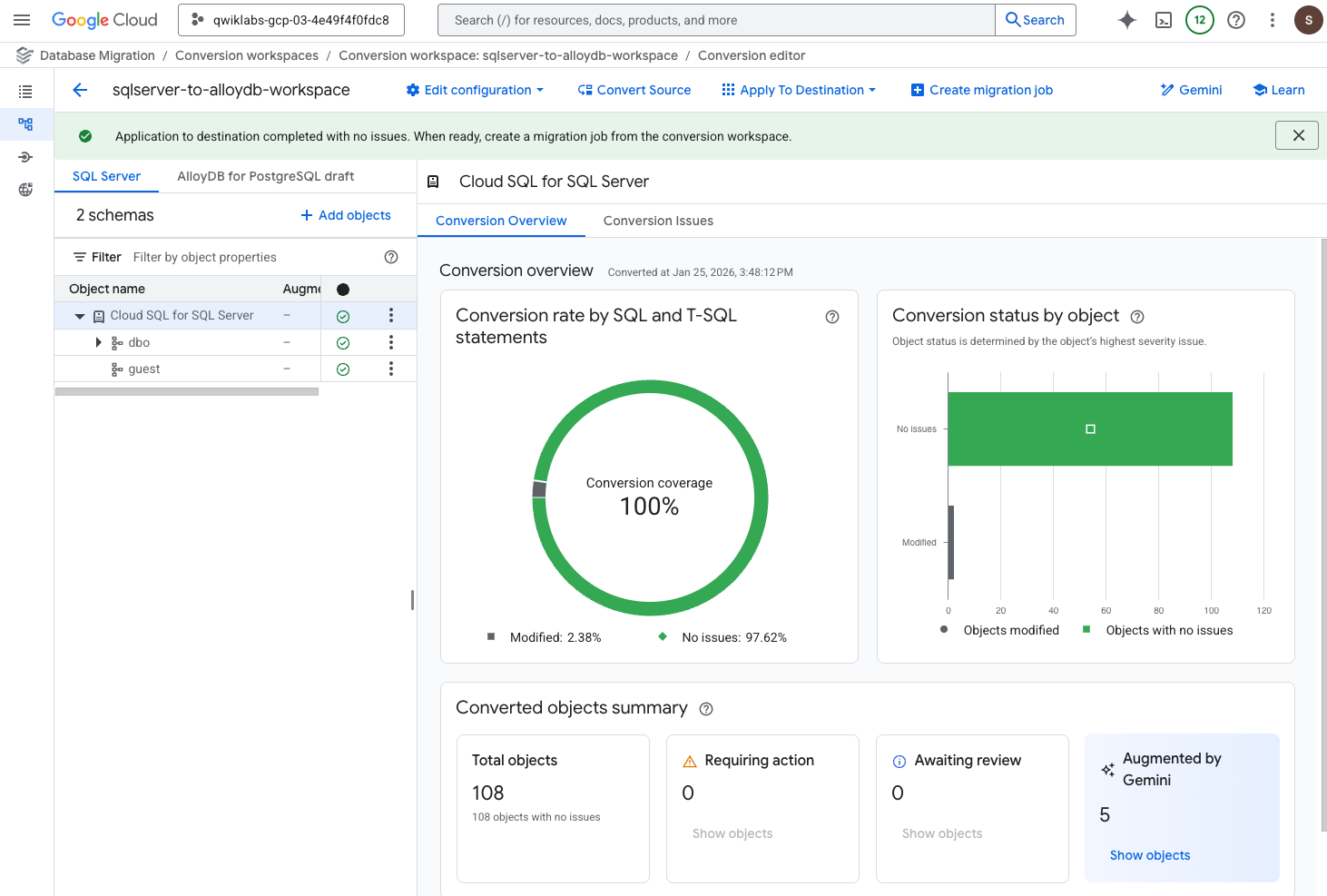

Apply the converted schema to AlloyDB for PostgreSQL

After completing the previous sections, you are now ready to create the schema and the code in the destination database (the Apply operation).

- On the Conversion editor page, on the top menu bar, click Apply To Destination > Apply.

Notice in this step, you are skipping the test of this configuration. In an actual production environment, it is recommended to test before applying it to the destination.

-

For Destination Connection Profile, select alloydb-destination-profile.

-

Click Define & continue.

Notice in this step, you are skipping the test of this configuration again. In an actual production environment, it is recommended to test before continuing.

- Under Review objects and apply conversion to destination, select all the objects by clicking in the Filter checkbox next to Object name.

All object names now have enabled checkboxes next to the name.

-

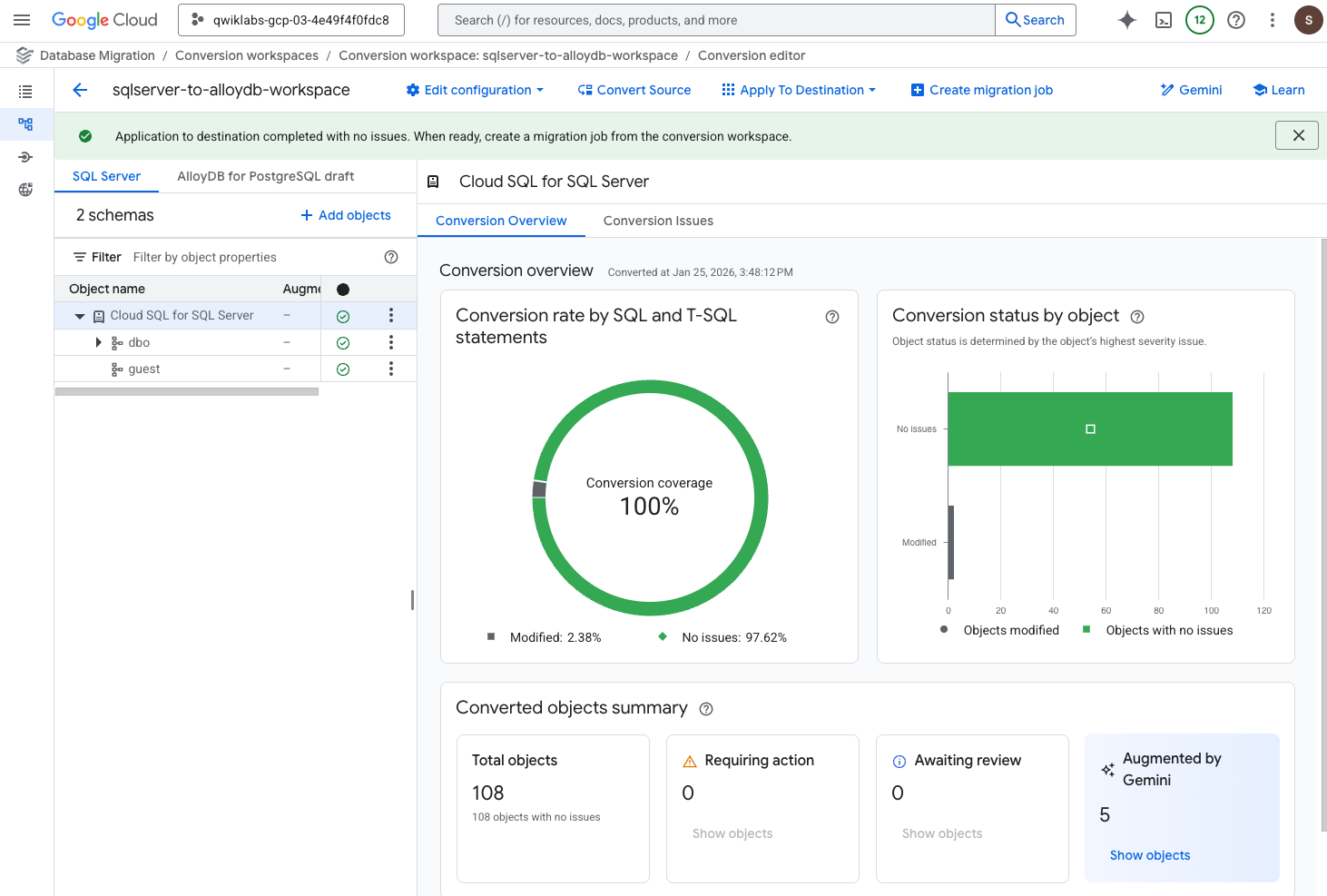

Click Apply To Destination.

-

When prompted, click Apply to confirm.

Note: It can take a few minutes for the conversion to be applied to the destination. When it has completed successfully, you see a message stating Application to destination completed with no issues. When ready, create a migration job from the conversion workspace, and you can proceed to the next steps.

- In the left pane, above 2 schemas, click on SQL Server to see that previously visible warnings have been resolved.

Task 5. Start a continuous migration job using Database Migration Service

In Task 2, you configured the Cloud SQL for SQL Server instance to support CDC for continuous migration. Now that the schema and code are prepared, you create a continuous migration job to move the data from Cloud SQL for SQL Server to AlloyDB for PostgreSQL and keep the two databases synchronized with minimal downtime.

Create and start the continuous migration job

- In the Database Migration menu (left), select Migration jobs.

- Click + Create migration job, and enter the required information:

| Property | Value |
| --- | --- |
| Migration job name | demo-migration-job |
| Migration job ID | Keep the auto-generated value |
| Source database engine | Under SQL Server, select Cloud SQL for SQL Server. |
| Destination database engine | Select AlloyDB for PostgreSQL. |
| Destination region | |
| Migration job type | Select Continuous. |

Note: For one-time migration jobs, you select One-time for the migration job type.

- Click Save & continue.

- On the Define a source tab, for the Source connection profile, select sqlserver-source-profile, and click Save & continue.

- On the Define a destination tab, for the Destination connection profile, select alloydb-destination-profile, and click Save & continue.

- On the Configure migration objects tab, for Conversion workspace, select sqlserver-to-alloydb-workspace.

- For Select objects to migrate, select all the objects by clicking the Filter checkbox next to Object name.

All object names are now selected.

- Click Save & continue.

- Click Create & start job.

In this section, you skip testing the migration job. In a production environment, it is recommended to test before running the job.

- When prompted, click Create & start to confirm.

Note: It can take a few minutes for the migration job to create, before you can see the status in the next section.

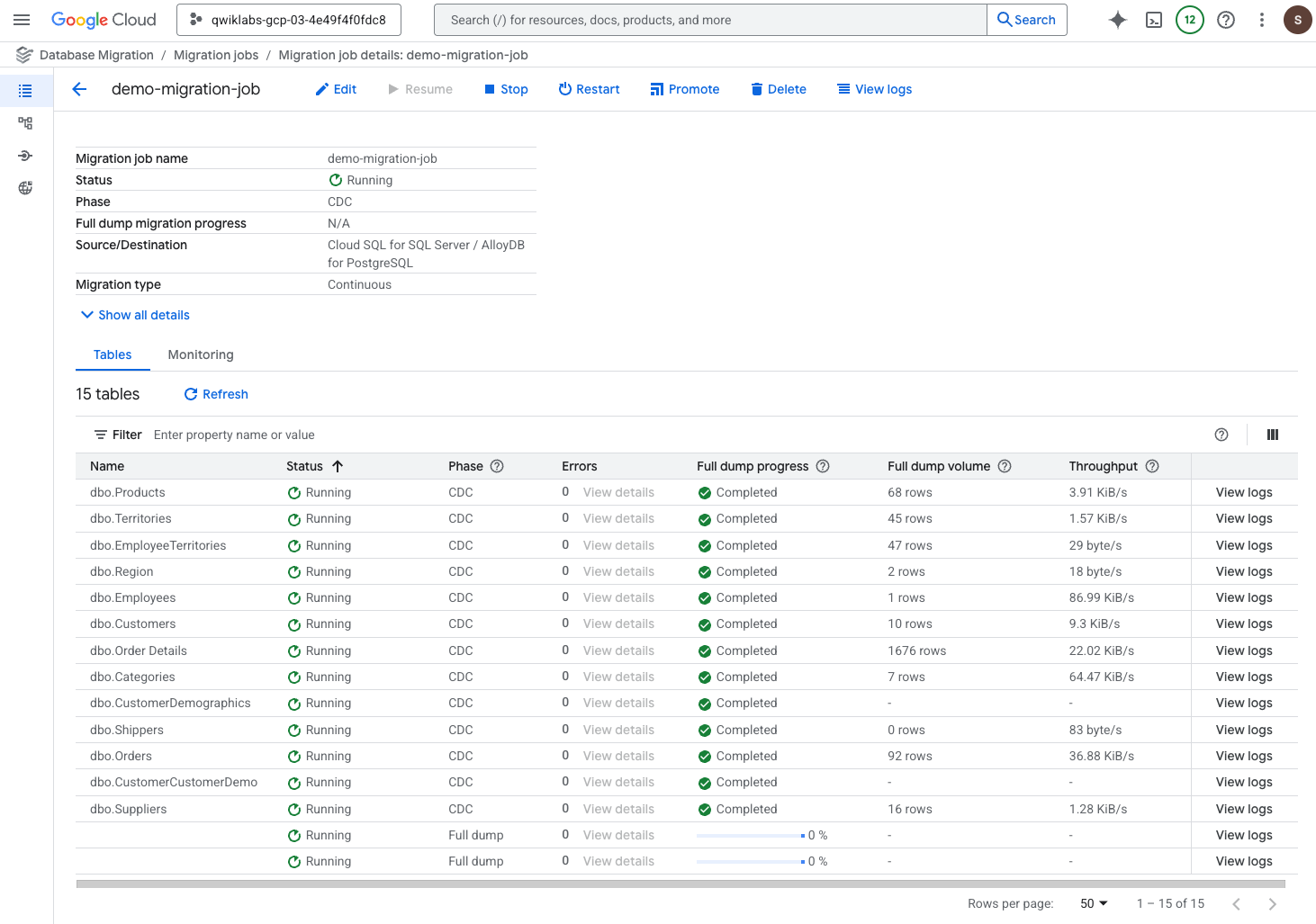

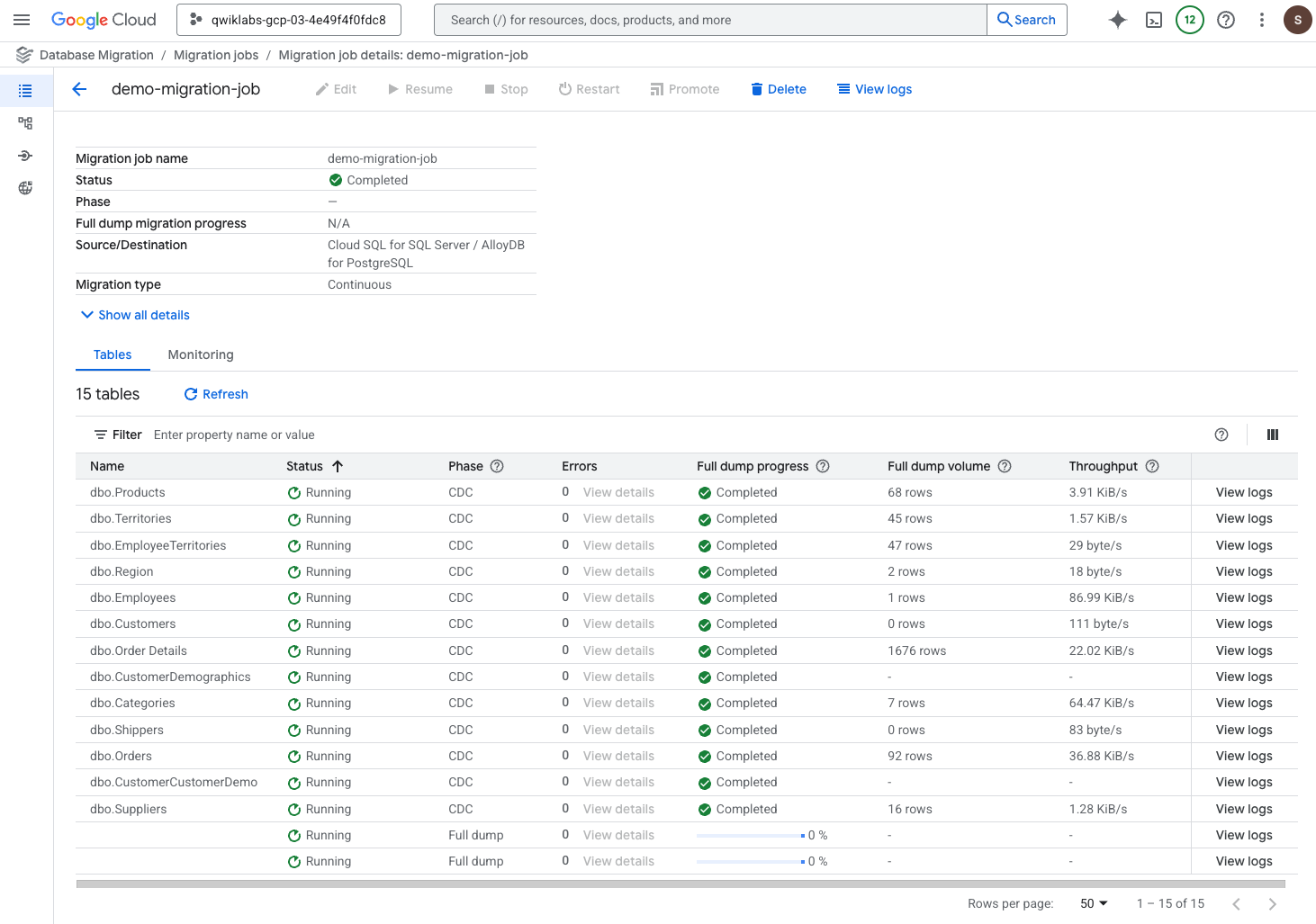

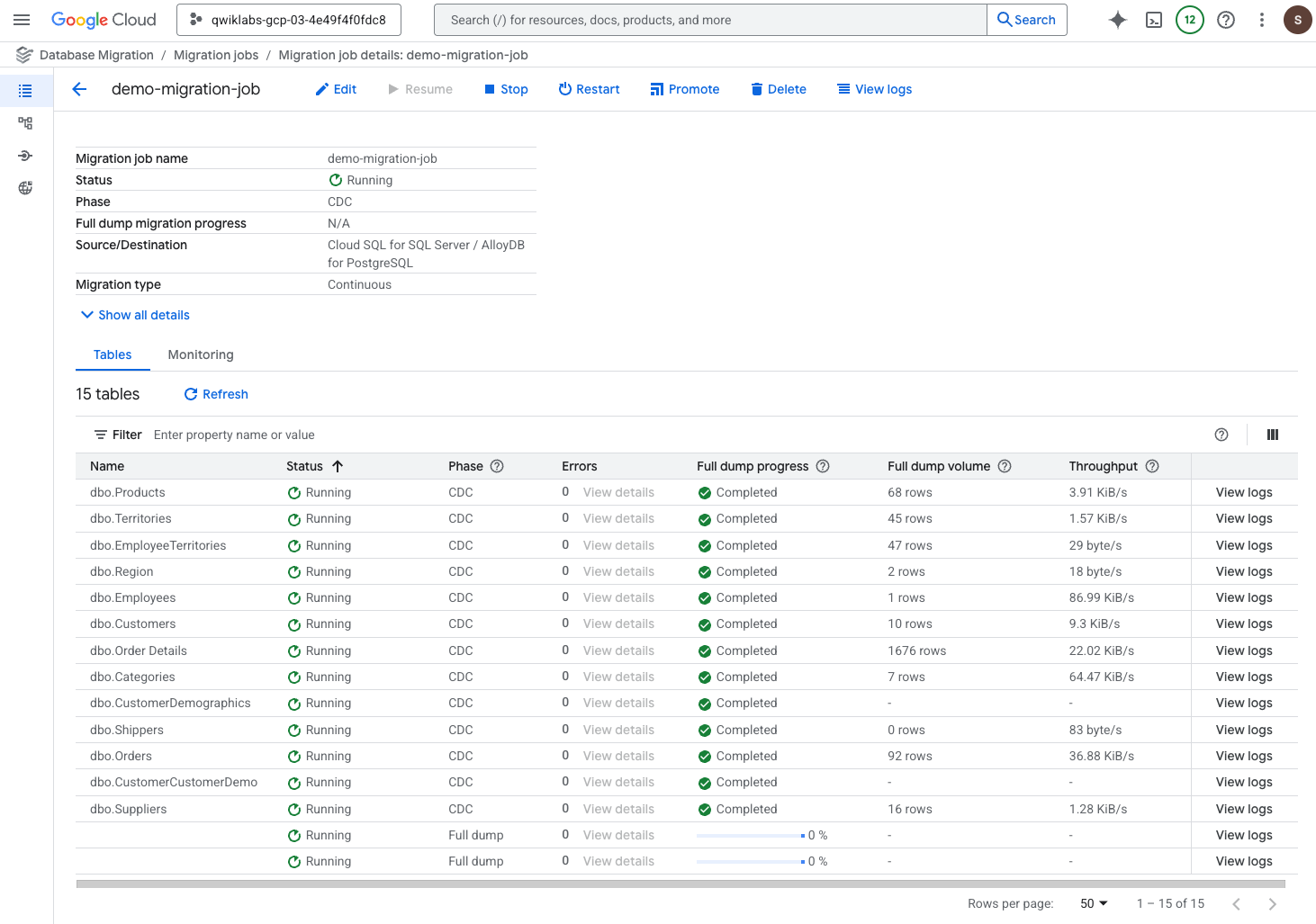

Monitor the migration job

- On the Migration jobs page, click the migration job named demo-migration-job to see the details page.

- Review the migration job status.

  - After the job has started, the status will show as Starting for several minutes, and then transition to Running.

Note: When the Phase for the job has changed from Full dump to CDC for the job and all tables, you can proceed to the next task.

For one-time migration jobs, you would proceed when the phase has changed from "Full dump" to "Ready to promote".

Leave this browser tab open, and proceed to the next section.
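The proceed condition described in the note above can be written as a small predicate. This is a hedged sketch: the phase strings are copied from the console labels mentioned in this lab, and the function `ready_to_proceed` and its inputs are hypothetical rather than part of any Database Migration Service API.

```python
from typing import Iterable


def ready_to_proceed(job_type: str, job_phase: str,
                     table_phases: Iterable[str]) -> bool:
    """Return True once the job has left the full-dump phase as the lab describes."""
    if job_type == "one-time":
        # One-time jobs: wait for the job phase to reach "Ready to promote".
        return job_phase == "Ready to promote"
    if job_type == "continuous":
        # Continuous jobs: the job and every table must have moved to CDC.
        return job_phase == "CDC" and all(p == "CDC" for p in table_phases)
    raise ValueError(f"unknown job type: {job_type}")


print(ready_to_proceed("continuous", "CDC", ["CDC", "Full dump"]))  # False
print(ready_to_proceed("continuous", "CDC", ["CDC", "CDC"]))        # True
```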

Task 6. Validate and complete the continuous migration job

In this task, you validate the continuous migration job and then complete the job by promoting the destination instance, which disconnects it from the source instance.

Validate the initial data dump

For both one-time and continuous migration jobs, you can validate the initial data dump by running queries for which you can compare the results between the source and destination instances. Recall that in Task 2, you ran some queries for row count; in this section, you repeat these queries but now in the destination instance.

- In the left menu of this lab instruction page, click the Open Google Cloud console button again to open a new browser tab for the console using Incognito mode.

- In the Google Cloud Console, on the Navigation menu, click View all products. Under Databases, click AlloyDB for PostgreSQL.

- On the Clusters page, click the instance named alloydb-instance-skillsdms.

- In the AlloyDB menu under Primary cluster, click AlloyDB Studio.

- Provide the following details to sign in, and click Authenticate.

| Property | Value |
| --- | --- |
| Database | Select dms |
| Authentication method | Select Built-in database authentication |
| User | Select dms |
| Password | Welcome123# |

- On the AlloyDB Studio page, click the Untitled Query tab to access the query window.

- To repeat the same query that you ran in the source database (Cloud SQL for SQL Server) in Task 2, copy and paste the following query in the query window, and click Run.

SELECT COUNT(*) FROM dbo.categories;

When the query has executed successfully, you see a message that says Statement executed successfully.

Notice that the query returns the same count (8) as the source database (Cloud SQL for SQL Server) that you queried in Task 2.

- Repeat the previous step with a second query of the data:

SELECT COUNT(*) FROM dbo.customers;

When the query has executed successfully, you see a message that says Statement executed successfully.

Notice that the query returns the same count (91) as the source database (Cloud SQL for SQL Server) that you queried in Task 2.

Leave this browser tab open, and proceed to the next section.
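The row-count check in this section amounts to comparing one count per table between source and destination. The sketch below assumes you have collected the counts into dictionaries by hand or with a client of your choice; the function name `count_mismatches` is hypothetical. It folds table names to lowercase because the source queries use quoted mixed-case names such as "dbo"."Categories", while the destination queries use unquoted names such as dbo.categories, which PostgreSQL folds to lowercase.

```python
from typing import Dict, List


def count_mismatches(source_counts: Dict[str, int],
                     dest_counts: Dict[str, int]) -> List[str]:
    """List tables whose row counts differ or that are missing on either side."""
    # Normalize names: "dbo.Categories" on SQL Server vs dbo.categories on PostgreSQL.
    src = {name.lower(): n for name, n in source_counts.items()}
    dst = {name.lower(): n for name, n in dest_counts.items()}
    problems = []
    for table in sorted(src.keys() | dst.keys()):
        if src.get(table) != dst.get(table):
            problems.append(
                f"{table}: source={src.get(table)} destination={dst.get(table)}")
    return problems


# Counts observed in this lab (Task 2 on the source, this task on the destination).
source_counts = {"dbo.Categories": 8, "dbo.Customers": 91}
dest_counts = {"dbo.categories": 8, "dbo.customers": 91}
print(count_mismatches(source_counts, dest_counts))  # []
```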

Validate that CDC is executing successfully

If you were completing a one-time migration job, you would skip this section validating CDC, and proceed to the next section to promote the destination instance as the final step for the migration job.

When CDC is enabled for continuous migration jobs, you can validate that CDC is functioning as expected by making some updates in the source instance and verifying that the changes are being written to the destination instance.

In this section, you verify that CDC is executing successfully by adding a new row to the Customers table in the Cloud SQL for SQL Server instance and then querying the updated row count in the AlloyDB for PostgreSQL instance.

-

In the left menu of this lab instruction page, click the Open Google Cloud console button again to open the console in a new browser tab using Incognito mode.

-

In the Google Cloud Console, on the Navigation menu, click Cloud SQL.

-

Click the instance ID mssql-instance-skillsdms.

-

In the Cloud SQL menu under Primary instance, click Cloud SQL Studio.

-

Provide the following details to sign in, and click Authenticate.

| Property | Value |
| --- | --- |
| Database | Select dms |
| User | Select sqlserver |
| Password | Welcome123# |

-

On the Cloud SQL Studio page, click the Untitled Query tab to access the query window.

-

To add a new row to the table named Customers, copy and paste the following query into the query window, and click Run.

INSERT INTO [dbo].[Customers] ([CustomerID], [CompanyName], [ContactName], [ContactTitle], [Address], [City], [Region], [PostalCode], [Country], [Phone], [Fax])
VALUES ('TEST1', 'Test CompanyName', 'Test ContactName', 'Test ContactTitle', 'Test Address', 'Test City', 'Test Region', '12345', 'Test Country', 'Test Phone', 'Test Fax');

When the query has executed successfully, you see a message that says Statement executed successfully.

-

Return to the browser tab for AlloyDB for PostgreSQL to verify that the new row has been written to the destination instance.

-

Run the count query again on the Customers table in the destination instance:

SELECT COUNT(*) FROM dbo.customers;

Notice that the returned row count is now 92 (the original 91 rows plus the row you inserted), which indicates that CDC has written the new row to the destination table.

Note: CDC can take a minute or two to fully synchronize the source and destination instances. If the returned row count is still 91, wait a minute and then run the query again.

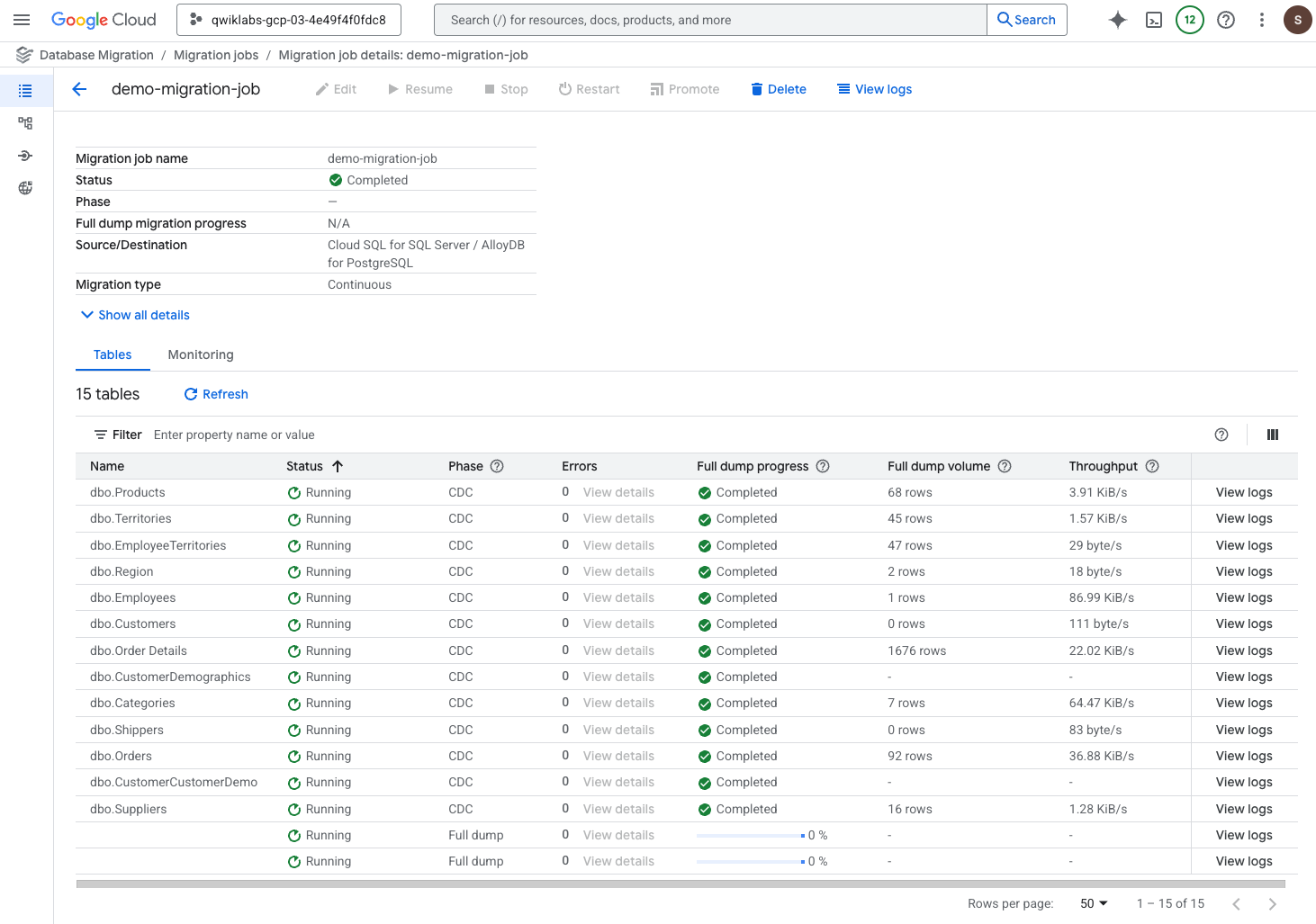
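The CDC check above amounts to re-running the count until it reflects the change: the destination should move from the baseline of 91 rows to 92 after the single inserted row. The following Python sketch shows that bounded retry loop under stated assumptions: `wait_for_row_count` and its `count_rows` argument are hypothetical, with `count_rows` standing in for running the count query in AlloyDB Studio (it is not a Database Migration Service API), and the one-minute default interval simply mirrors the note's advice to wait a minute between attempts.

```python
import time
from typing import Callable


def wait_for_row_count(
    count_rows: Callable[[], int],
    expected: int,
    attempts: int = 5,
    interval_seconds: float = 60.0,
) -> bool:
    """Re-run a count until it returns `expected` or the attempts run out.

    `count_rows` is a hypothetical stand-in for running
    SELECT COUNT(*) FROM dbo.customers; against the destination.
    """
    for attempt in range(attempts):
        if count_rows() == expected:
            return True
        if attempt < attempts - 1:
            # CDC replication is asynchronous, so wait before checking again.
            time.sleep(interval_seconds)
    return False


if __name__ == "__main__":
    # Simulated destination: the inserted row shows up on the third check.
    observed = iter([91, 91, 92])
    print(wait_for_row_count(lambda: next(observed), expected=92, interval_seconds=0))  # True
```

Bounding the number of attempts keeps the check from waiting indefinitely if replication has stalled.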

Promote the destination instance

In this final section, you complete the migration job by promoting the destination instance, which disconnects it from the source instance.

-

Return to the browser tab for the Database Migration Service migration job.

-

On the top menu bar of the migration job page, click Promote.

-

When prompted, click Promote to confirm.

Note: When the Phase for the job changes from Promotion to Completed, the migration job has completed successfully, and you can click Check my progress below to verify the objective. It can take up to 10 minutes for the migration job page to display the updated Phase value of Completed.

Click Check my progress to verify the objective.


Congratulations!

You have successfully completed a heterogeneous database migration from Cloud SQL for SQL Server to AlloyDB for PostgreSQL using the full feature set of Google Cloud's Database Migration Service, including AI-assisted schema conversion via the Conversion Workspace and a continuous migration job.

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated April 9, 2026

Lab Last Tested April 9, 2026

Copyright 2026 Google LLC. All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.
